Customer Data Privacy in Agentic Commerce: Compliance Frameworks and Agent Design Constraints

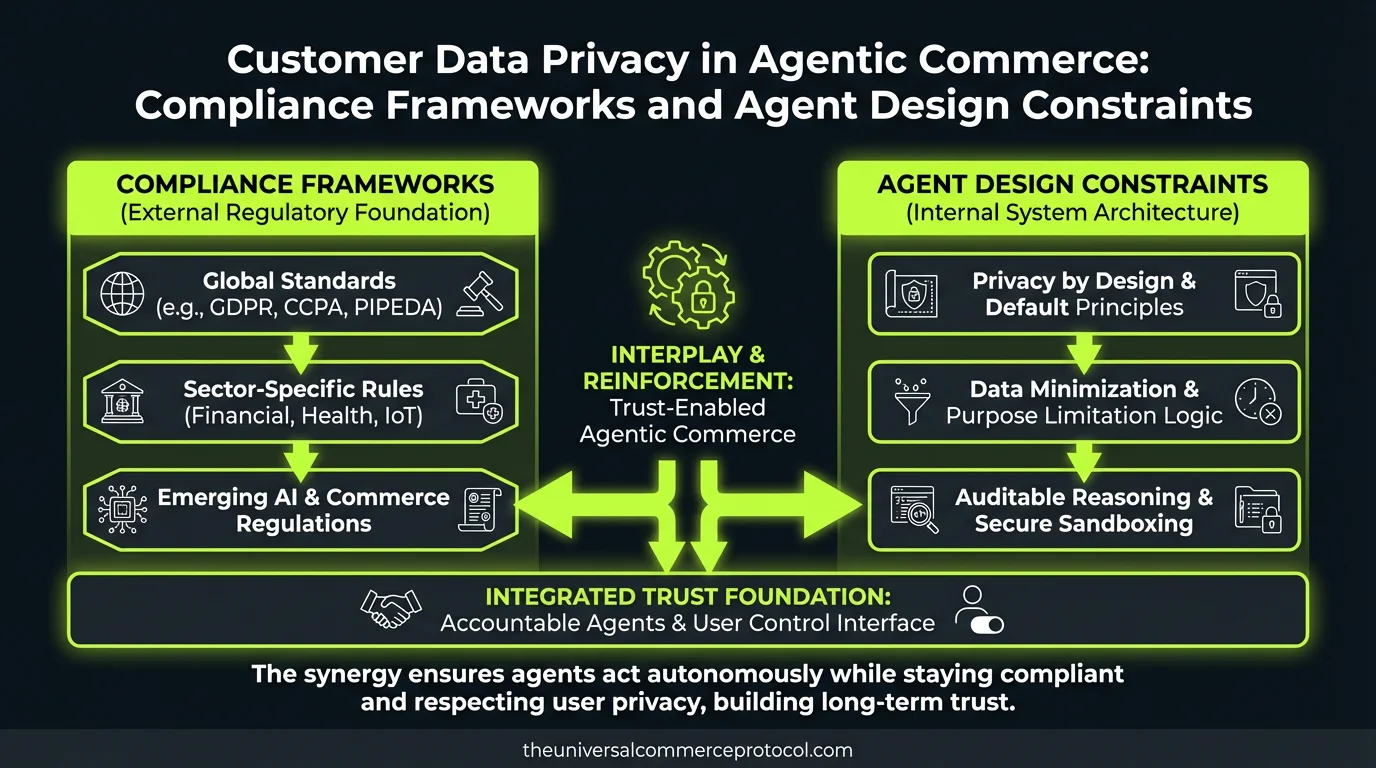

Agentic commerce systems process customer data at scale: browsing history, purchase patterns, payment details, location signals, and behavioral profiles. Unlike traditional checkout flows, where data moves through predictable pipelines, agents make autonomous decisions using customer information in real time, creating compliance obligations that existing privacy frameworks were not designed with in mind.

The core problem: an autonomous agent making a purchase recommendation or applying a discount is processing personal data to make a decision about an individual. When that decision is consequential, it can trigger GDPR Article 22 (automated decision-making) and CCPA deletion and opt-out rights in ways that are operationally complex for merchants and developers.

The Emerging Compliance Gap

No published post on this site addresses how agents interact with GDPR Article 22 (the right not to be subject to solely automated decision-making), CCPA Section 1798.100 (right to know), or emerging regulations like Brazil’s LGPD Article 20 (automated decisions). The existing coverage focuses on payment security, fraud detection, and architecture, not data governance for agent systems.

Three specific gaps exist:

1. Agent Transparency and Explainability Under Law

When an agentic commerce system recommends Product A over Product B, or offers a personalized discount, the agent used customer data to make that decision. Under GDPR Articles 13 to 15, which require “meaningful information about the logic involved” in automated decision-making, and under California’s emerging AI regulations, customers can ask why. (Article 22(3) adds the right to obtain human intervention and to contest the decision.) Traditional e-commerce systems log this in conversion funnels. Agents encode it in state vectors and reward functions, which are opaque to customers and often opaque to merchants.

2. Consent and Data Minimization at Agent Runtime

An agent that recovers abandoned carts or personalizes voice commerce recommendations needs access to prior purchase history, wishlist data, and browsing signals. But if a customer withdraws consent under GDPR Article 7, the agent’s state—its internal knowledge of that customer—remains. Purging agent memory without breaking agent performance is an unsolved operational problem.

3. Right to Deletion and Agent Retraining

A customer exercises their GDPR right to be forgotten. Deleting their row from the customer database is straightforward. But if that customer’s transaction history was used to train the agent’s decision-making model (via reinforcement learning or supervised fine-tuning), deleting them requires model retraining—an expensive operation that most SMB merchants cannot perform monthly or weekly.

How Regulations Apply to Agent Systems

GDPR Article 22: Automated Decision-Making

The regulation states: “The data subject shall have the right not to be subject to a decision based solely on automated processing, including profiling, which produces legal effects concerning him or her or similarly significantly affects him or her.”

A cart recovery agent that applies a dynamic discount to a customer based on their abandonment likelihood is making an automated decision with financial effect. Where that effect is significant enough for Article 22 to apply, the customer can request human intervention. Most agentic commerce platforms today have no mechanism to pause an agent decision and escalate to a human merchant for review.

CCPA Section 1798.100: Right to Know

California requires businesses to disclose the personal information collected, the sources of that information, and the purposes for which it is used. If a voice commerce agent uses a customer’s location data to recommend same-day delivery, the merchant must disclose that use. But the merchant may not have visibility into what data the agent actually accessed during that transaction.

LGPD Article 20 (Brazil) and Emerging AI Regulations

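Brazil’s data protection law includes a specific right to information about automated decision-making. The EU AI Act (Chapter III) requires human oversight, risk documentation, and performance monitoring for systems it classifies as high-risk. The high-risk list in Annex III does not name commercial recommendation agents as such, but agents that assess creditworthiness or eligibility for essential services can fall within it, so classification should be checked per use case.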

Compliance Design Patterns for Agentic Commerce

Pattern 1: Data Access Logging in Agent Prompts

Require agents to explicitly log which customer data fields they accessed during a decision. Instead of an agent with implicit access to all customer attributes, constrain the agent to a declared set of data inputs:

Allowed data inputs: purchase_history (last 90 days), cart_items, device_type, session_duration. Forbidden: email, phone, address, payment method.

This creates an audit trail that supports GDPR transparency requirements and can be shared with customers on request. Agent frameworks built around explicit tool definitions, such as Anthropic’s, already give you a place to declare what an agent may call; extend those definitions to include data classification.
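A minimal sketch of the declared-inputs idea, assuming a plain Python wrapper around the customer record. The class name `AuditedCustomerView`, the field names, and the log shape are all hypothetical, and the 90-day window on `purchase_history` is not enforced here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical allow-list mirroring the policy above.
# Anything not listed (email, phone, address, payment method) is denied.
ALLOWED_FIELDS = {"purchase_history", "cart_items", "device_type", "session_duration"}


@dataclass
class AuditedCustomerView:
    """Wraps a customer record so every read attempt is logged and gated."""
    customer_id: str
    record: dict
    access_log: list = field(default_factory=list)

    def read(self, field_name: str, purpose: str):
        allowed = field_name in ALLOWED_FIELDS
        # Log before enforcing, so denied attempts also appear in the trail.
        self.access_log.append({
            "customer_id": self.customer_id,
            "field": field_name,
            "purpose": purpose,
            "allowed": allowed,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        if not allowed:
            raise PermissionError(f"agent may not read '{field_name}'")
        return self.record.get(field_name)


view = AuditedCustomerView("cust_123", {"cart_items": ["sku_1"], "email": "a@example.com"})
cart = view.read("cart_items", purpose="cart_recovery")
try:
    view.read("email", purpose="cart_recovery")
except PermissionError:
    pass  # the denied read is still recorded in view.access_log
```

Because each log entry carries the customer ID, the same list can later be filtered to answer an access request for one customer.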

Pattern 2: Consent-Aware Agent Gates

Build consent checks into the agent’s decision loop. Before accessing email marketing data or location signals, the agent checks the customer’s consent record. If consent is withdrawn, the agent falls back to a baseline recommendation (best-seller, seasonal, price-based) that does not require personalization.

Example: If customer.consent.behavioral_tracking == false, recommend products from bestseller list rather than collaborative filtering results.
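The gate above can be sketched in a few lines of Python. This is an illustration under assumptions, not a platform API: the customer dictionary shape, `BESTSELLERS`, and the stand-in `collaborative_filtering` function are hypothetical.

```python
from typing import Callable

BESTSELLERS = ["sku_best_1", "sku_best_2", "sku_best_3"]  # hypothetical fallback list


def recommend(customer: dict, personalized: Callable[[dict], list]) -> dict:
    """Return personalized results only when behavioral-tracking consent is on record."""
    consent = customer.get("consent", {})
    # A missing consent record is treated as no consent (fail closed).
    if consent.get("behavioral_tracking") is True:
        return {"items": personalized(customer), "basis": "personalized"}
    return {"items": list(BESTSELLERS), "basis": "bestseller_fallback"}


def collaborative_filtering(customer: dict) -> list:
    # Stand-in for a real recommender; purely illustrative.
    return [f"similar_to_{sku}" for sku in customer.get("purchase_history", [])]


opted_in = {"consent": {"behavioral_tracking": True}, "purchase_history": ["sku_9"]}
opted_out = {"consent": {"behavioral_tracking": False}, "purchase_history": ["sku_9"]}

print(recommend(opted_in, collaborative_filtering))
print(recommend(opted_out, collaborative_filtering))
```

Returning the `basis` alongside the items means the audit log can show which path was taken without re-deriving it from the consent record.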

Pattern 3: Agent Sandboxing for Model Training

Separate the agent’s real-time decision model from the agent’s training corpus. Real-time agents operate on encrypted or tokenized customer identifiers, not raw PII. Training data is anonymized before fine-tuning. This approach is labor-intensive but is becoming standard in regulated industries (financial services, healthcare).
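One way to tokenize identifiers before data reaches the training corpus is a keyed hash. The sketch below uses Python's standard `hmac` and `hashlib` modules; the event fields, the `to_training_row` helper, and the placeholder key are assumptions for illustration.

```python
import hashlib
import hmac


def tokenize(customer_id: str, secret_key: bytes) -> str:
    """Derive a stable keyed token for a customer ID using HMAC-SHA256."""
    return hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()


def to_training_row(event: dict, secret_key: bytes) -> dict:
    """Keep only the fields the model needs and swap the raw ID for a token."""
    return {
        "customer_token": tokenize(event["customer_id"], secret_key),
        "sku": event["sku"],
        "price": event["price"],
    }


# Placeholder key for the example; a real key would come from a secrets manager.
KEY = b"demo-key-do-not-use-in-production"

event = {"customer_id": "cust_123", "email": "a@example.com", "sku": "sku_1", "price": 19.99}
row = to_training_row(event, KEY)
print(sorted(row))
```

Note that a keyed token is pseudonymization rather than anonymization: whoever holds the key can re-link rows to a customer, so GDPR still treats the tokenized rows as personal data.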

Pattern 4: Human-in-the-Loop for High-Impact Decisions

For decisions that produce “legal or similarly significant effects” (GDPR Article 22), require human review before execution. A cart recovery agent can recommend a discount, but a human merchant must approve discounts above 20% before the agent applies them. This gives the merchant a documented human-review step to point to when a customer invokes the right to human intervention.
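A sketch of such an approval gate, assuming the 20% threshold from the example above. The `DiscountProposal` type, the in-memory review queue, and the status names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

APPROVAL_THRESHOLD = 0.20  # discounts above 20% need human sign-off


@dataclass
class DiscountProposal:
    customer_id: str
    discount: float  # fraction, e.g. 0.25 for 25%
    status: str = "proposed"
    reviewer: Optional[str] = None


def submit(proposal: DiscountProposal, review_queue: list) -> DiscountProposal:
    """Apply small discounts directly; park larger ones for a human decision."""
    if proposal.discount > APPROVAL_THRESHOLD:
        proposal.status = "pending_review"
        review_queue.append(proposal)
    else:
        proposal.status = "applied"
    return proposal


def review(proposal: DiscountProposal, reviewer: str, approve: bool) -> DiscountProposal:
    """Record the human decision; only pending proposals can be reviewed."""
    if proposal.status != "pending_review":
        raise ValueError("proposal is not awaiting review")
    proposal.reviewer = reviewer
    proposal.status = "applied" if approve else "rejected"
    return proposal


queue: list = []
small = submit(DiscountProposal("cust_1", 0.10), queue)
large = submit(DiscountProposal("cust_2", 0.30), queue)
review(large, reviewer="merchant@example.com", approve=True)
```

Storing the reviewer on the proposal keeps evidence that a person, not the agent, made the final call.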

Pattern 5: Deletion-Safe Agent Architectures

Use training-data attribution methods (for example, influence functions) or post-hoc rule extraction to identify which training examples contributed most to a model’s decision; model-agnostic explainability tools such as SHAP and LIME complement this by showing which input features drove a given output. When a customer requests deletion, prioritize removing their data from the agent’s training set using machine unlearning techniques (approximate or exact, depending on regulatory tolerance).

Tools emerging in this space: Katalyst AI, Microsoft AI Audit, and open-source frameworks like Alibi Explain are beginning to address deletion under GDPR, but they are not yet integrated into agentic commerce platforms.
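One exact-unlearning approach that keeps retraining costs bounded is to shard the training data, train one sub-model per shard, and retrain only the shards a deleted customer touched (the idea behind SISA-style training). The sketch below covers only the bookkeeping, not the training itself; the shard count, class name, and row shape are assumptions.

```python
import hashlib
from collections import defaultdict

NUM_SHARDS = 4  # assumption; a real deployment would tune this


def shard_for(customer_id: str) -> int:
    """Deterministically map a customer to one shard."""
    digest = hashlib.sha256(customer_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % NUM_SHARDS


class ShardedTrainingSet:
    def __init__(self) -> None:
        self.shards = defaultdict(list)  # shard index -> training rows
        self.dirty = set()               # shards whose sub-model must be retrained

    def add(self, customer_id: str, row: dict) -> None:
        self.shards[shard_for(customer_id)].append({"customer_id": customer_id, **row})

    def delete_customer(self, customer_id: str) -> int:
        """Remove all rows for a customer and mark their shard for retraining."""
        idx = shard_for(customer_id)
        before = len(self.shards[idx])
        self.shards[idx] = [r for r in self.shards[idx] if r["customer_id"] != customer_id]
        removed = before - len(self.shards[idx])
        if removed:
            self.dirty.add(idx)
        return removed


training = ShardedTrainingSet()
training.add("cust_a", {"sku": "sku_1"})
training.add("cust_a", {"sku": "sku_2"})
training.add("cust_b", {"sku": "sku_3"})
removed = training.delete_customer("cust_a")
print(removed, training.dirty)
```

Because each customer lives in exactly one shard, a deletion request dirties at most one sub-model, which is what makes periodic retraining more realistic for smaller merchants.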

Implementation Checklist for Merchants

- Data Inventory: Map all customer data accessed by your agents (real-time and training-time).

- Consent Management: Integrate your agent with a consent management platform (OneTrust, TrustArc) and test agent behavior when consent is withdrawn.

- Audit Logging: Log every data access and agent decision. Make logs queryable by customer ID.

- Transparency Statements: Publish documentation on how your agents use customer data. Provide a one-click download of all data accessed about a given customer (GDPR Article 15).

- Deletion Procedure: Define a process for deleting customer data from agent models (even if approximate) and communicate timelines to customers.

- Human Review Workflows: Identify decisions that require human oversight and implement approval gates.

- DPA Updates: Ensure your Data Processing Agreements with payment processors, fulfillment providers, and LLM vendors (OpenAI, Anthropic, Google) include agentic commerce use cases and confirm liability allocation.

Emerging Vendor Solutions

Stripe’s Privacy Controls (announced Q4 2025) include opt-out APIs for behavioral tracking in payment flows. Merchants using Stripe’s agentic commerce APIs can now disable recommendation agents for customers with privacy preferences on file.

Shopify’s Consent Framework for Agents (beta, March 2026) integrates Shopify Consent API with Shopify Flow automation, allowing merchants to gate agent behaviors by customer consent attributes.

Anthropic’s Constitutional AI for Commerce embeds privacy constraints directly into model prompts, allowing merchants to declare data policies without code.

FAQ

Q: Does an agentic commerce system always trigger GDPR Article 22?

A: Not necessarily. A simple search agent that ranks products by keyword match generally does not. An agent that sets prices or eligibility with no human involvement is more likely to. The key test: is the decision based solely on automated processing, and does it produce legal or similarly significant effects for the customer (price, eligibility, access to an offer)? If yes, Article 22 likely applies. Consult your data protection authority or legal counsel.

Q: Can I delete a customer’s data if it was used to train my agent model?

A: Not immediately. Removing a customer from a trained model requires retraining (full or approximate) or machine unlearning. GDPR requires erasure “without undue delay” and a response to the request within one month, extendable by two further months for complex requests, but it does not say how that applies to data already absorbed into a trained model. Regulators expect good-faith effort. Timeframes of 30-90 days are emerging as acceptable in practice.

Q: Do I need explicit consent to use customer data in an agent’s real-time decision?

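A: Under GDPR, you need a lawful basis: consent, or an alternative such as contract performance or legitimate interest. Where Article 22 applies, the grounds are narrower (contract necessity, legal authorization, or explicit consent). The CCPA works on an opt-out model: prior consent is generally not required, but customers can opt out of the sale or sharing of their data. Under LGPD, consent is one of several legal bases. Default to explicit consent and provide easy opt-out.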

Q: Who is liable if an agent makes a discriminatory recommendation (e.g., showing lower prices to one demographic)?

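A: The merchant is typically the primary liable party under GDPR, CCPA, and, where credit decisions are involved, fair lending laws. The AI vendor (OpenAI, Anthropic, Google) may share liability, for example if the vendor trained or fine-tuned the agent with biased data, but this depends on your contract and jurisdiction. Document your training data and audit agent outputs regularly.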

Q: Can I use anonymized customer data to train agents without consent?

A: Only if the anonymization is genuine, meaning the data is irreversibly de-identified. GDPR regulators set a high bar and have rejected many anonymization claims in practice. Assume you need consent unless you have a specific legal opinion.

Q: How do I explain an agent’s decision to a customer who requests it?

A: Implement agent logging that captures inputs and outputs. Offer customers a plain-language summary: “We recommended this product because customers with your purchase history also bought it.” Avoid technical jargon about weights, embeddings, or reward functions.
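As a rough sketch, a logged decision can be turned into that kind of summary by mapping the inputs the agent used to pre-written reasons. The template text, the `inputs_used` and `product_name` keys, and the decision record shape are hypothetical.

```python
# Hypothetical mapping from logged input names to customer-facing reasons.
REASON_TEMPLATES = {
    "purchase_history": "customers with a similar purchase history also bought it",
    "cart_items": "it goes with items currently in your cart",
    "bestseller_fallback": "it is one of our best sellers",
}


def explain(decision: dict) -> str:
    """Build a plain-language explanation from a logged decision record."""
    reasons = [
        REASON_TEMPLATES[name]
        for name in decision["inputs_used"]
        if name in REASON_TEMPLATES  # internal signals with no template are skipped
    ]
    product = decision["product_name"]
    if not reasons:
        return f"We recommended {product} based on general catalog information."
    return f"We recommended {product} because " + " and ".join(reasons) + "."


logged = {"product_name": "Trail Shoes", "inputs_used": ["purchase_history", "embedding_v3"]}
print(explain(logged))
```

Keeping the reasons in a reviewed template table, rather than generating them freely, makes it easier for legal and support teams to sign off on the wording.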

Looking Ahead

The regulatory environment is hardening. The EU AI Act’s obligations phase in from 2025 onward, and agents used for decisions in its high-risk categories (such as creditworthiness assessment) face human-oversight and documentation requirements. US regulators are beginning enforcement under FCRA (Fair Credit Reporting Act) for agents that make credit or pricing decisions. Brazil’s ANPD (data protection authority) has issued guidance on LGPD compliance for AI systems.

Merchants and developers who embed privacy compliance into agent architecture today will avoid costly retrofits and regulatory fines later. Privacy is not a friction cost—it is a design requirement.

