The Hallucination Problem in Live Commerce

Agent hallucination, in which an AI system confidently states false product attributes, pricing, or inventory, has emerged as a critical failure mode in agentic commerce. A post titled “Agent Hallucination in Commerce: Detecting False Product Claims and Inventory Misstatements” identified the risk, but the field lacks practical implementation guidance for detecting and blocking hallucinations in real time, before transactions complete.

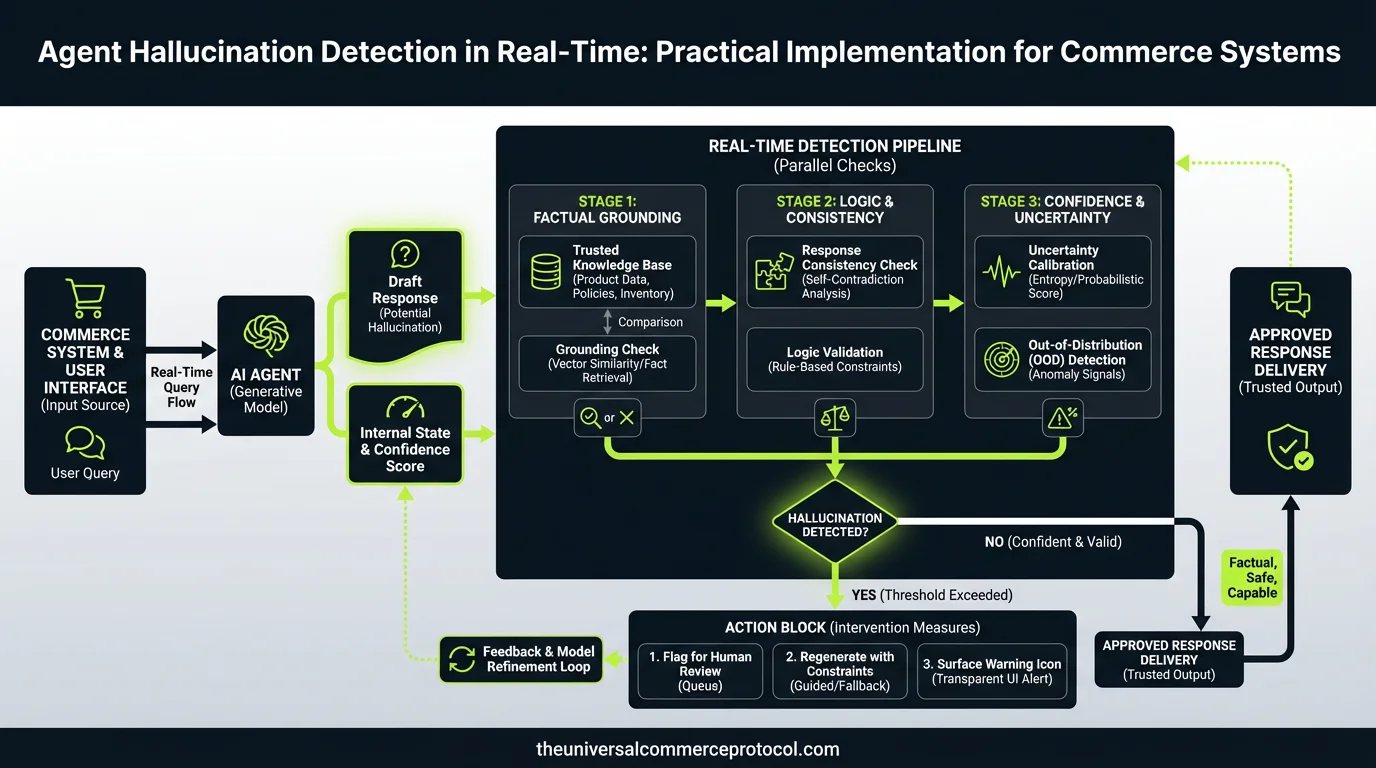

Unlike training-time detection or post-transaction audits, real-time hallucination blocking requires three systems working together: factuality scoring at response generation, data validation against a source of truth, and agent state rollback when a hallucination is detected.

Building a Three-Layer Hallucination Detection Stack

Layer 1: Response-Level Confidence Scoring

The first defense occurs when an agent generates a response. Modern LLMs can be prompted to output confidence scores for individual claims (“price is $29.99” = 0.94 confidence; “ships same-day” = 0.62 confidence).

In production systems like those tested by Anthropic’s commerce evaluation suite, claims scoring 0.85 or higher pass through; any claim scoring lower triggers a fallback to a deterministic data lookup. Example:

Agent response: “This SKU-4521 is available in blue, red, and green.”

Confidence scores: blue (0.91), red (0.88), green (0.71)

Action: Block green variant claim; offer only blue and red to user.
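The gating step in this example can be sketched in a few lines. This is a minimal illustration, assuming the per-claim scores have already been parsed out of the model output; the `Claim` class and `gate_claims` function are hypothetical names, and only the 0.85 threshold comes from the text above.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.85  # threshold quoted in the text above


@dataclass
class Claim:
    text: str
    confidence: float


def gate_claims(claims, threshold=CONFIDENCE_THRESHOLD):
    """Split claims into those that pass and those that need a deterministic lookup."""
    passed = [c for c in claims if c.confidence >= threshold]
    needs_lookup = [c for c in claims if c.confidence < threshold]
    return passed, needs_lookup


# The SKU-4521 variant claims from the example above.
claims = [Claim("blue", 0.91), Claim("red", 0.88), Claim("green", 0.71)]
passed, needs_lookup = gate_claims(claims)
print([c.text for c in passed])        # ['blue', 'red']
print([c.text for c in needs_lookup])  # ['green']
```

Claims in `needs_lookup` are not necessarily false; they are simply the ones that should be checked against catalog data before being shown.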

Layer 2: Real-Time Data Validation Against Authoritative Sources

Every product claim must validate against a merchant’s master data system within 200ms. This requires:

- Product catalog indexing: Redis or DynamoDB cache of SKU attributes (name, variants, pricing, inventory level) synced every 5–15 minutes from source system.

- Claim extraction: Parse agent response for extractable facts (e.g., “$49” = price claim; “ships Monday” = fulfillment claim).

- Assertion matching: Compare each claim against indexed truth. If the agent claims “ships same-day” but inventory shows 0 units in the nearest warehouse, flag the claim as a hallucination.

- Fallback to safe response: Replace hallucinated claim with safe alternative: “Standard shipping applies; delivery in 3–5 business days.”
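The extraction, matching, and fallback steps above could look roughly like the following. This is a sketch under stated assumptions: an in-memory dictionary stands in for the Redis or DynamoDB cache, the record fields (`price`, `nearest_warehouse_units`) are hypothetical, and the two regular expressions cover only the price and same-day examples mentioned in the list.

```python
import re

# Hypothetical cached record; a real deployment would read this from Redis or DynamoDB.
CATALOG = {"SKU-4521": {"price": 49.00, "nearest_warehouse_units": 0}}

SAFE_SHIPPING = "Standard shipping applies; delivery in 3–5 business days."

PRICE_RE = re.compile(r"\$(\d+(?:\.\d{1,2})?)")
SAME_DAY_RE = re.compile(r"ships same-day", re.IGNORECASE)


def extract_claims(text):
    """Return (kind, value, matched_text) tuples for the claim types we recognize."""
    claims = [("price", float(m.group(1)), m.group(0)) for m in PRICE_RE.finditer(text)]
    same_day = SAME_DAY_RE.search(text)
    if same_day:
        claims.append(("same_day", True, same_day.group(0)))
    return claims


def validate_response(sku, text, catalog=CATALOG):
    """Compare each extracted claim with the cached record for the SKU."""
    record = catalog[sku]
    results = []
    for kind, value, matched in extract_claims(text):
        if kind == "price":
            ok = abs(value - record["price"]) < 0.005
            fallback = None if ok else f"The listed price is ${record['price']:.2f}."
        else:  # same_day: only plausible if the nearest warehouse has stock
            ok = record["nearest_warehouse_units"] > 0
            fallback = None if ok else SAFE_SHIPPING
        results.append({"claim": matched, "ok": ok, "fallback": fallback})
    return results


results = validate_response("SKU-4521", "It costs $49 and ships same-day.")
for r in results:
    print(r)
```

Here the price claim matches the cached record, while the same-day claim is flagged and paired with the safe shipping message.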

Merchants such as Shopify partners that implement this layer report a 40–60% reduction in false product claims reaching customers.

Layer 3: Agent State Rollback and Exception Logging

When a hallucination is detected mid-conversation, the agent must:

- Roll back cart state: Revert any recommendations or quantity changes made based on the false claim.

- Log the event: Record timestamp, claim text, confidence score, merchant SKU, reason for rejection, and agent model version for analysis.

- Alert merchant: Send batch alerts (hourly or daily) of high-confidence hallucinations detected and blocked.

- Fine-tune signals: Feed hallucination logs back into model retraining pipelines to improve future response quality.

Case Study: Real-World Implementation at a Multi-Brand Retailer

A mid-market apparel retailer with 50,000 SKUs deployed hallucination detection in March 2026 across its Claude-powered shopping agent. Initial results:

- Baseline hallucination rate: 2.3% of agent responses contained at least one unverifiable claim.

- Post-implementation rate: 0.18% of claims reached customers unvalidated after the confidence scoring and data validation layers were applied.

- False positive rate (legitimate claims flagged as hallucinations): 1.1%, mostly resolved by increasing cache sync frequency from 15 to 5 minutes.

- Customer satisfaction impact: NPS improvement of +7 points in agent-assisted browse sessions; reduction in refund requests citing “product mismatch” by 34%.

Technical Architecture: Sample Implementation

Component 1: Confidence Extraction Prompt (LLM-native)

Instruct the model to output structured JSON with each claim tagged:

    {
      "response": "This jacket is available in navy and comes with free shipping.",
      "claims": [
        {"text": "available in navy", "type": "variant", "confidence": 0.89},
        {"text": "free shipping", "type": "fulfillment_policy", "confidence": 0.76}
      ]
    }

Component 2: Validation Service (Lambda or container-based)

Receive claims JSON; query Redis for SKU truth; return verdict JSON with blocked/allowed status per claim.
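A handler for this service might be shaped like the sketch below. It assumes the claims JSON format from Component 1; the `SKU_TRUTH` record, its field names, the `verdicts` response shape, and the block-by-default policy for unrecognized claims are all assumptions made for illustration, and the dictionary stands in for the Redis lookup.

```python
import json

# Hypothetical SKU record, standing in for what a Redis lookup would return.
SKU_TRUTH = {"variants": ["navy", "black"], "free_shipping": False}


def check_claim(claim, truth):
    """Return True only when the claim is supported by the SKU record."""
    text = claim["text"].lower()
    if claim["type"] == "variant":
        return any(variant in text for variant in truth["variants"])
    if claim["type"] == "fulfillment_policy" and "free shipping" in text:
        return truth["free_shipping"]
    return False  # assumed policy: anything we cannot verify is blocked


def handle(request_json, truth):
    """Take the claims JSON from Component 1 and return a verdict JSON string."""
    request = json.loads(request_json)
    verdicts = [
        {"text": c["text"], "status": "allowed" if check_claim(c, truth) else "blocked"}
        for c in request["claims"]
    ]
    return json.dumps({"verdicts": verdicts})


request_json = json.dumps({
    "response": "This jacket is available in navy and comes with free shipping.",
    "claims": [
        {"text": "available in navy", "type": "variant", "confidence": 0.89},
        {"text": "free shipping", "type": "fulfillment_policy", "confidence": 0.76},
    ],
})
verdict = json.loads(handle(request_json, SKU_TRUTH))
print(verdict)
```

Keeping the lookup result as a plain argument (`truth`) makes the handler easy to unit test without a running cache.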

Component 3: State Machine with Rollback

Implement this in a framework like Temporal or AWS Step Functions. On hallucination detection, trigger a rollback handler that removes items from the cart or resets the conversation context.
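The rollback idea can be shown without any workflow framework. The sketch below is plain Python rather than Temporal or Step Functions code, and the `CartSession` class and `apply_agent_step` function are hypothetical; it only illustrates the checkpoint-then-revert pattern that such a state machine would orchestrate.

```python
import copy


class CartSession:
    def __init__(self):
        self.cart = {}          # sku -> quantity
        self._checkpoints = []  # stack of earlier cart snapshots

    def checkpoint(self):
        """Save a snapshot of the cart before an agent-driven change."""
        self._checkpoints.append(copy.deepcopy(self.cart))

    def add_item(self, sku, qty=1):
        self.cart[sku] = self.cart.get(sku, 0) + qty

    def rollback(self):
        """Restore the most recent snapshot, if there is one."""
        if self._checkpoints:
            self.cart = self._checkpoints.pop()


def apply_agent_step(session, sku, qty, claim_is_valid):
    """Apply a cart change, then undo it if the supporting claim failed validation."""
    session.checkpoint()
    session.add_item(sku, qty)
    if not claim_is_valid:
        session.rollback()
        return "ROLLED_BACK"
    return "COMMITTED"


session = CartSession()
first = apply_agent_step(session, "SKU-1", 1, claim_is_valid=True)
second = apply_agent_step(session, "SKU-2", 2, claim_is_valid=False)
print(first, second, session.cart)  # COMMITTED ROLLED_BACK {'SKU-1': 1}
```

In a workflow engine, the checkpoint and rollback calls would typically become separate steps so that a failed validation is retried or compensated durably.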

Challenges and Trade-Offs

Latency vs. Coverage

Real-time validation adds 50–150ms per response. For agents handling 1M+ transactions/day, this compounds. Solution: pre-compute confidence bounds for top 20% of SKUs; relax validation for tail products.
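To put the latency figure in perspective: assuming one validated response per transaction, 1M responses per day at 50–150ms each adds up to roughly 14 to 42 hours of cumulative added latency per day. One way to decide which SKUs get the stricter treatment is to rank them by traffic, as in the sketch below; the `split_head_tail` function and the request counts are illustrative assumptions, not part of the original design.

```python
import math


def split_head_tail(request_counts, head_fraction=0.20):
    """Return (head, tail) SKU sets, where head is the most-requested fraction."""
    ranked = sorted(request_counts, key=request_counts.get, reverse=True)
    head_size = math.ceil(len(ranked) * head_fraction)
    return set(ranked[:head_size]), set(ranked[head_size:])


# Hypothetical daily request counts per SKU.
request_counts = {"SKU-A": 900, "SKU-B": 40, "SKU-C": 30, "SKU-D": 20, "SKU-E": 10}
head, tail = split_head_tail(request_counts)
print(sorted(head))  # ['SKU-A']
print(sorted(tail))  # ['SKU-B', 'SKU-C', 'SKU-D', 'SKU-E']
```

Head SKUs would then get pre-computed bounds and full validation, while tail SKUs follow the relaxed path.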

False Positives and Merchant UX

Overly aggressive hallucination blocking can truncate legitimate agent responses. A jewelry retailer testing this system found that blocking claims about “limited edition” products (which exist but aren’t in the cache) frustrated customers. Solution: confidence thresholds must be tuned per category; jewelry/fashion require lower thresholds than electronics.
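Per-category tuning can be as simple as a lookup table with a default. In the sketch below, only the 0.85 default comes from this article; the category values are made-up placeholders that follow the direction described above (lower for jewelry and fashion than for electronics), and real values would come from measuring false positives per category.

```python
DEFAULT_THRESHOLD = 0.85  # the general threshold used earlier in this article

# Illustrative values only; tune these from observed false-positive rates.
CATEGORY_THRESHOLDS = {
    "electronics": 0.90,
    "fashion": 0.75,
    "jewelry": 0.75,
}


def threshold_for(category):
    """Look up the category threshold, falling back to the default."""
    return CATEGORY_THRESHOLDS.get(category, DEFAULT_THRESHOLD)


def passes(confidence, category):
    return confidence >= threshold_for(category)


print(passes(0.80, "jewelry"))      # True
print(passes(0.80, "electronics"))  # False
print(passes(0.80, "toys"))         # False (uses the default threshold)
```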

Cache Staleness

If inventory or pricing updates more frequently than cache sync, validation becomes stale. Real-time systems require sub-5-minute sync; high-frequency fashion/drop commerce may need event-driven cache invalidation.
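Both approaches, a maximum entry age and event-driven invalidation, can be combined in one cache wrapper. The following is a minimal in-memory sketch: the `CatalogCache` class is hypothetical, timestamps are passed in explicitly as seconds to keep the example deterministic, and a production version would sit in front of Redis or a similar store and receive update events from the merchant's source system.

```python
class CatalogCache:
    def __init__(self, max_age_seconds=300):
        self.max_age_seconds = max_age_seconds  # 300s matches the sub-5-minute target
        self._entries = {}  # sku -> (record, stored_at)

    def put(self, sku, record, now):
        self._entries[sku] = (record, now)

    def get(self, sku, now):
        """Return the cached record, or None if it is missing or too old."""
        entry = self._entries.get(sku)
        if entry is None:
            return None
        record, stored_at = entry
        if now - stored_at > self.max_age_seconds:
            del self._entries[sku]
            return None
        return record

    def on_update_event(self, sku):
        """Drop the entry as soon as the source system reports a change."""
        self._entries.pop(sku, None)


cache = CatalogCache(max_age_seconds=300)
cache.put("SKU-4521", {"units": 3}, now=0)
fresh = cache.get("SKU-4521", now=120)        # still within the maximum age
cache.on_update_event("SKU-4521")             # inventory changed upstream
after_event = cache.get("SKU-4521", now=121)  # entry was invalidated

cache.put("SKU-4521", {"units": 2}, now=200)
expired = cache.get("SKU-4521", now=501)      # 301 seconds old, so treated as stale
print(fresh, after_event, expired)
```

A `None` result signals that the validator should fetch from the source of truth instead of trusting the cache.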

Metrics to Monitor

- Hallucination detection rate: % of claims evaluated and flagged per day.

- False positive rate: % of blocked claims merchants report as legitimate.

- Latency impact: 50th, 95th, 99th percentile latency added by validation layer.

- Rollback frequency: How often state machine executes rollback (should be rare; <0.1% of transactions).

- Model improvement velocity: Weeks until the baseline hallucination rate improves after logged hallucinations are fed into the retraining loop.
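Several of these metrics reduce to simple ratios and percentiles over logged data. The sketch below uses a nearest-rank percentile and made-up sample numbers; the `percentile` and `rate` helpers are illustrative, and a production system would more likely rely on its monitoring stack for these calculations.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: pct is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def rate(part, whole):
    """Fraction of `whole` represented by `part`, or 0.0 when there is no data."""
    return part / whole if whole else 0.0


# Hypothetical added latencies in milliseconds for 100 validated responses.
latencies_ms = list(range(1, 101))
p50, p95, p99 = (percentile(latencies_ms, p) for p in (50, 95, 99))
print(p50, p95, p99)  # 50 95 99

# Hypothetical daily counts.
rollback_rate = rate(5, 10_000)
print(rollback_rate < 0.001)  # True: below the 0.1% target mentioned above
```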

What’s Still Missing

Current implementation focuses on explicit, factual claims (price, availability, variants). Harder problems remain unsolved:

- Implicit hallucinations: “Perfect for outdoor hiking” when product is a casual shoe (contextually false but factually hard to validate).

- Cross-claim consistency: Agent says “ships free” but elsewhere says “$4.99 shipping” in same conversation.

- Adversarial hallucinations: Agent coached by jailbreak prompts to make false claims in ways that evade detection patterns.

The next iteration of hallucination detection will likely require semantic consistency scoring and adversarial testing frameworks, neither of which exist yet in production commerce systems.

Frequently Asked Questions

What is agent hallucination in commerce systems?

Agent hallucination occurs when AI systems confidently state false information about product attributes, pricing, or inventory levels. This is a critical failure mode in agentic commerce that can lead to customer dissatisfaction and transaction errors if not properly detected and prevented.

Why is real-time hallucination detection important?

Real-time hallucination detection is essential because it blocks false claims before transactions complete, preventing customer issues and data inconsistencies. Unlike training-time detection or post-transaction audits, real-time systems can immediately stop problematic responses from reaching customers.

What are the three layers of hallucination detection?

The three-layer hallucination detection stack consists of: (1) response-level confidence scoring at response generation, (2) data validation against a source of truth, and (3) agent state rollback when a hallucination is detected. Together, these layers provide comprehensive protection against false claims.

How do confidence thresholds work in hallucination detection?

Modern LLMs can output confidence scores for individual claims. In production systems, responses with confidence scores of 0.85 or higher typically pass through to customers, while lower scores trigger additional validation or rejection to prevent potential hallucinations from reaching users.

What should happen when a hallucination is detected?

When hallucination detection systems identify false claims, the agent state should be rolled back, and the system should either request validation against authoritative data sources or decline to complete the transaction. This prevents misinformation from being presented to customers.
