The Compliance Audit Gap in Agentic Commerce

While the industry has published extensively on agent observability, cost attribution, and fraud prevention, one critical gap remains: how merchants prove to regulators that their AI agents operated within legal bounds. Observability is internal visibility. Compliance auditing is external proof.

The difference matters because regulators—particularly those overseeing financial services, consumer protection, and payment networks—now require documented evidence that autonomous agents followed applicable rules. A merchant can have perfect internal observability and still fail an audit if transaction logs don’t demonstrate legal compliance.

What Regulators Are Actually Asking For

The Federal Trade Commission (FTC), Consumer Financial Protection Bureau (CFPB), and payment networks including Mastercard and Visa have begun issuing guidance on AI-driven commerce. The common thread: merchants must maintain auditable records that show agent decision-making aligned with regulations.

Key compliance areas regulators are examining:

- Fair Lending & Discrimination: Did the agent deny credit or services, or offer worse pricing, to members of protected classes? Audit records must show decision inputs and outputs for each transaction.

- Truth in Lending (TILA): For agents making credit offers, were all required disclosures generated and delivered before purchase completion?

- COPPA (Children’s Online Privacy): If an agent interacted with users under 13, did it collect or use personal data without verifiable parental consent? Transaction logs must prove age-gating worked.

- TCPA (Telemarketing): If agents initiate contact, did they maintain Do-Not-Call compliance? Call/message logs must show opt-out enforcement.

- State Consumer Protection Laws: Many states require proof that AI agents disclosed their machine nature before completing transactions. Some require opt-in consent before autonomous checkout.

The Three Layers of Compliance Audit Records

Layer 1: Transaction Intent & Authorization

This is the foundation. For every agent-initiated or agent-completed transaction, the audit record must document:

- The original user request or consent that triggered the agent

- Timestamp and unique transaction ID

- Agent model version and configuration active at transaction time

- Explicit user confirmation (if required by law) that an agent would complete the purchase

- Proof of identity verification (if applicable)

Example: A consumer asks, “Buy me the cheapest flights to Denver next month.” The audit log must show: user ID, exact request text, timestamp, agent version (e.g., “Claude 3.5 with commerce MCP v2.1”), and the user’s confirmation that the agent can book without further input.
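
A minimal sketch of how the Layer 1 fields above might be captured as one immutable record. The class name `IntentRecord`, the helper `new_intent_record`, and every field name are illustrative assumptions, not a required schema.

```python
import json
import uuid
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class IntentRecord:
    """Layer 1 record: what the user asked for and which agent build acted."""
    transaction_id: str
    timestamp: str                      # ISO 8601, UTC
    user_id: str
    request_text: str                   # the user's exact words, unedited
    agent_version: str
    user_confirmed_autonomous: bool     # user agreed the agent may complete the purchase
    identity_verification_ref: Optional[str] = None  # None when not applicable


def new_intent_record(user_id: str, request_text: str, agent_version: str,
                      user_confirmed_autonomous: bool,
                      identity_verification_ref: Optional[str] = None) -> IntentRecord:
    """Stamp the record with a fresh ID and the current UTC time."""
    return IntentRecord(
        transaction_id=f"txn_{uuid.uuid4().hex}",
        timestamp=datetime.now(timezone.utc).isoformat(timespec="seconds"),
        user_id=user_id,
        request_text=request_text,
        agent_version=agent_version,
        user_confirmed_autonomous=user_confirmed_autonomous,
        identity_verification_ref=identity_verification_ref,
    )


record = new_intent_record(
    user_id="user_123",  # hypothetical user
    request_text="Buy me the cheapest flights to Denver next month.",
    agent_version="Claude 3.5 with commerce MCP v2.1",
    user_confirmed_autonomous=True,
)
print(json.dumps(asdict(record), indent=2))
```

Freezing the dataclass only guards against accidental mutation in application code; it does not replace immutable storage.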

Layer 2: Decision Rationale & Constraint Verification

This is where most merchants fail audits. Regulators want to see why the agent made each decision, not just that it did.

Your audit record must capture:

- Which regulatory constraints the agent evaluated (price cap, age gate, geographic restriction, etc.)

- Input data used in each decision (user profile, product attributes, terms, etc.)

- Model outputs and confidence scores

- Which constraint was the deciding factor if the agent rejected an option

- Any fallback or escalation logic triggered

Example: An agent refuses to sell alcohol. The audit log should show: “User age field: 16. Constraint evaluation: alcohol_age_gate = 21 (US law). Decision: REJECT. Escalation: human_review.” Not just: “Transaction denied.”
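
The alcohol example can be sketched as a small constraint evaluator that records every check and names the deciding constraint. The `Constraint` type, the `evaluate` function, and the output field names are assumptions made for illustration. A missing input is treated as a failed check here, which is one possible policy among several.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class Constraint:
    name: str                           # e.g. "alcohol_age_gate"
    input_field: str                    # key to read from the decision inputs
    threshold: Any
    check: Callable[[Any, Any], bool]   # (observed value, threshold) -> passed?


def evaluate(constraints: List[Constraint], inputs: Dict[str, Any]) -> Dict[str, Any]:
    """Run every constraint and report which one, if any, decided the outcome."""
    checks = []
    deciding = None
    for c in constraints:
        value = inputs.get(c.input_field)
        # A missing input counts as a failure rather than a silent pass.
        passed = value is not None and c.check(value, c.threshold)
        checks.append({
            "constraint": c.name,
            "input_field": c.input_field,
            "input_value": value,
            "threshold": c.threshold,
            "passed": passed,
        })
        if not passed and deciding is None:
            deciding = c.name           # first failing constraint is the deciding factor
    return {
        "checks": checks,
        "decision": "REJECT" if deciding else "APPROVE",
        "deciding_constraint": deciding,
        "escalation": "human_review" if deciding else None,
    }


ALCOHOL_RULES = [
    Constraint("alcohol_age_gate", "user_age", 21, lambda age, minimum: age >= minimum),
]

result = evaluate(ALCOHOL_RULES, {"user_age": 16})
print(result["decision"], result["deciding_constraint"], result["escalation"])
```

Recording the observed value and threshold next to the outcome is what lets a reviewer reconstruct why the agent rejected the sale.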

Layer 3: Disclosure & Consent Evidence

Many jurisdictions now require proof that users knew they were interacting with an agent. Your audit must document:

- When and how the user was told “this is an AI agent”

- What disclosures were shown (text, video, interactive consent modal)

- Timestamp of user acknowledgment

- If consent was required, proof user affirmatively opted in

- User’s ability to revoke agent permission (and proof they understood this option)

This is especially important for voice or conversational agents, where the line between chatbot and autonomous actor is ambiguous.
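
One way to make the Layer 3 evidence checkable is to store the disclosure, acknowledgment, and revocation timestamps together and test them against the transaction time. This is a sketch: `DisclosureEvidence`, `consent_valid_at`, and the ordering rules it enforces are assumptions, and the actual legal test depends on the jurisdiction.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DisclosureEvidence:
    disclosure_format: str                  # e.g. "text", "video", "consent_modal"
    disclosure_shown_at: datetime
    acknowledged_at: Optional[datetime]     # None if the user never acknowledged
    opted_in: bool
    revoked_at: Optional[datetime] = None   # set when the user withdraws permission


def consent_valid_at(evidence: DisclosureEvidence, transaction_time: datetime,
                     consent_required: bool = True) -> bool:
    """True if disclosure and consent were in place when the agent transacted."""
    if evidence.acknowledged_at is None:
        return False
    if evidence.acknowledged_at < evidence.disclosure_shown_at:
        return False    # acknowledgment cannot precede the disclosure
    if evidence.acknowledged_at > transaction_time:
        return False    # acknowledgment must come before the purchase
    if consent_required and not evidence.opted_in:
        return False
    if evidence.revoked_at is not None and evidence.revoked_at <= transaction_time:
        return False    # permission was withdrawn before the purchase
    return True


def utc(hour: int, minute: int) -> datetime:
    """Helper for the example: a time on 2026-03-14 in UTC."""
    return datetime(2026, 3, 14, hour, minute, tzinfo=timezone.utc)


evidence = DisclosureEvidence("consent_modal", utc(14, 14), utc(14, 15), opted_in=True)
print(consent_valid_at(evidence, utc(14, 32)))
```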

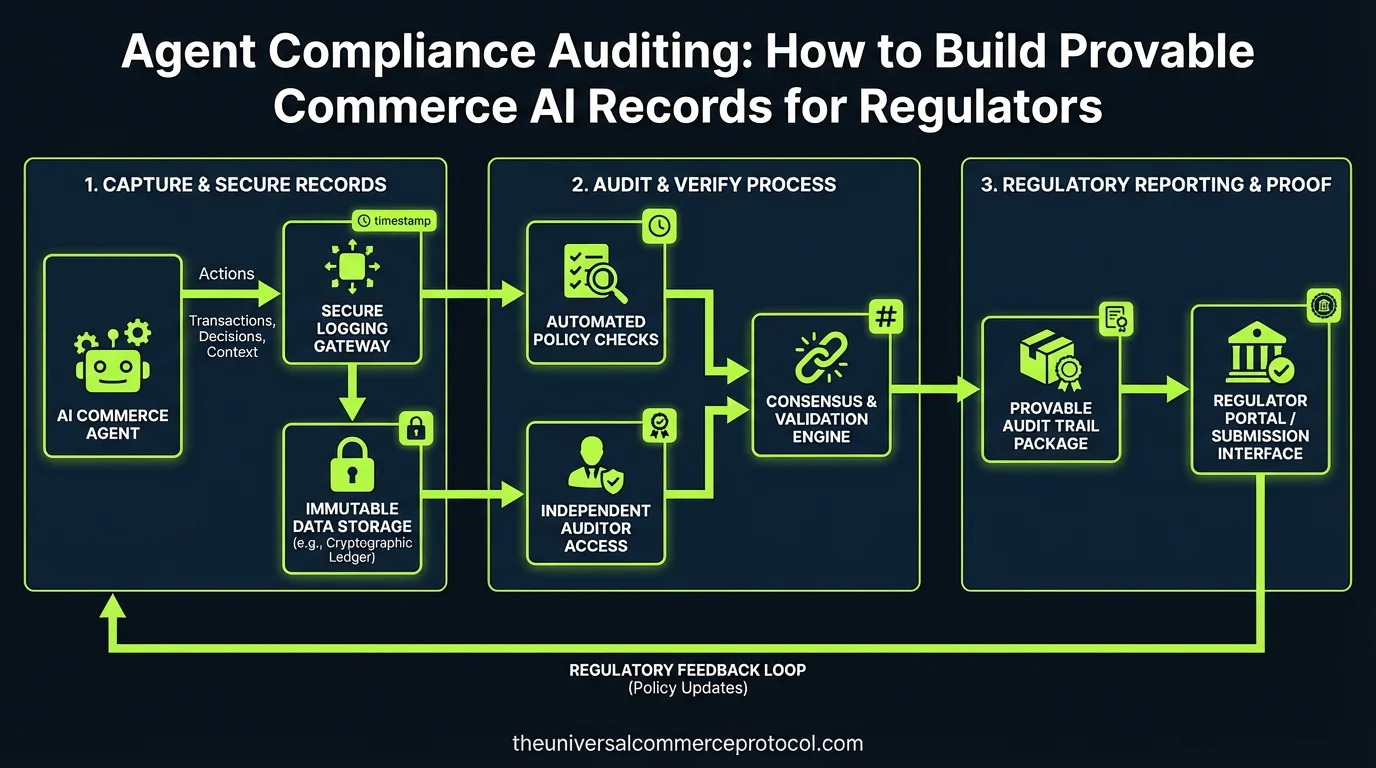

Technical Implementation: Building Auditable Agent Systems

Instrument Your Agent’s Decision Points

Rather than trying to audit agent behavior after the fact, design compliance checks into the agent itself. Use structured logging at decision gates:

    {
      "transaction_id": "txn_7f3e2a9c",
      "timestamp": "2026-03-14T14:32:18Z",
      "agent_version": "claude-3.5-commerce-v2.1",
      "compliance_checks": {
        "age_gate": {
          "constraint": "product_requires_age_18",
          "user_age_field": 34,
          "passed": true
        },
        "geographic_restriction": {
          "constraint": "shipping_allowed_us_only",
          "user_location": "CA",
          "passed": true
        },
        "price_cap": {
          "constraint": "max_transaction_5000",
          "proposed_total": 1299.99,
          "passed": true
        }
      },
      "decision_rationale": "All constraints passed. Agent authorized to proceed.",
      "user_consent_token": "consent_xyz123_provided_2026-03-14T14:15:00Z"
    }

This is not for internal observability—it’s for the regulator. Store these logs immutably (append-only database or blockchain-backed audit trail) so they can’t be modified retroactively.
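
To illustrate what tamper-evident storage means in practice, here is a minimal in-memory hash chain: each entry stores the hash of the previous one, so editing any earlier record breaks verification. The class `HashChainedLog` is a hypothetical sketch, not a production audit store. A real deployment would sit on durable, access-controlled storage.

```python
import copy
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: Dict[str, Any]) -> str:
    """SHA-256 over the previous hash plus a canonical JSON form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class HashChainedLog:
    def __init__(self) -> None:
        self._entries: List[Dict[str, Any]] = []

    def append(self, payload: Dict[str, Any]) -> str:
        """Add a record linked to the one before it; return the new entry's hash."""
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        stored = copy.deepcopy(payload)  # detach from the caller's dict
        entry = {"prev_hash": prev, "payload": stored, "hash": _digest(prev, stored)}
        self._entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry makes this False."""
        prev = GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev:
                return False
            if entry["hash"] != _digest(prev, entry["payload"]):
                return False
            prev = entry["hash"]
        return True


log = HashChainedLog()
log.append({"transaction_id": "txn_7f3e2a9c", "check": "age_gate", "passed": True})
log.append({"transaction_id": "txn_7f3e2a9c", "check": "price_cap", "passed": True})
print(log.verify())
```

A hash chain detects retroactive edits but does not prevent them, so it is usually paired with write-once storage or with publishing the latest hash to a party outside the merchant’s control.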

Separate Audit Records from Operational Logs

Your observability logs (which help engineering debug agent performance) are different from compliance audit records (which prove to regulators you followed the law). Use different storage and retention policies:

- Operational logs: Can be deleted after 90 days; live in your internal data warehouse; used for monitoring and alerts

- Audit records: Must be retained per regulatory requirement (typically 3–7 years for financial transactions); stored in compliance-certified systems; encrypted and access-controlled
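
The split between the two retention policies can be expressed as a small lookup that a purge job consults before deleting anything. The record class names and the use of 365-day years are simplifying assumptions. The durations mirror the figures above and should be replaced with whatever your regulator actually requires.

```python
from datetime import date, timedelta

# Retention periods in days, keyed by a hypothetical record class.
RETENTION_DAYS = {
    "operational": 90,
    "audit_general": 3 * 365,
    "audit_financial": 7 * 365,
}


def may_delete(record_class: str, created_on: date, today: date) -> bool:
    """True once the record has been kept for its full retention period."""
    return today >= created_on + timedelta(days=RETENTION_DAYS[record_class])


print(may_delete("operational", date(2026, 1, 1), date(2026, 4, 1)))
print(may_delete("audit_financial", date(2026, 1, 1), date(2030, 1, 1)))
```

An unknown record class raises `KeyError` instead of defaulting to deletable, so a misclassified record fails loudly.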

Use Structured Decision Logging, Not Narrative Logs

Regulators can’t audit narrative logs. (“Agent decided to approve” is not compliant; “Agent evaluated: age=34, threshold=18, result=PASS” is.)

Define a schema for every compliance-relevant decision your agent makes. Use enums, boolean flags, and numeric thresholds—not free-text reasoning.
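
A sketch of what such a schema could look like, using enums for the check name and result so that free text cannot slip into the record. `CheckName`, `Result`, `CheckRecord`, and `age_check` are illustrative names, and a real schema would cover every decision type your agent makes.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Any, Dict


class CheckName(Enum):
    AGE_GATE = "age_gate"
    PRICE_CAP = "price_cap"


class Result(Enum):
    PASS = "PASS"
    FAIL = "FAIL"


@dataclass(frozen=True)
class CheckRecord:
    check: CheckName
    observed: float
    threshold: float
    result: Result

    def __post_init__(self) -> None:
        # Reject plain strings so narrative text cannot stand in for an enum value.
        if not isinstance(self.check, CheckName) or not isinstance(self.result, Result):
            raise TypeError("check and result must be enum members, not free text")

    def to_log(self) -> Dict[str, Any]:
        """Serialize to plain values suitable for a JSON audit entry."""
        return {
            "check": self.check.value,
            "observed": self.observed,
            "threshold": self.threshold,
            "result": self.result.value,
        }


def age_check(age: int, minimum: int) -> CheckRecord:
    outcome = Result.PASS if age >= minimum else Result.FAIL
    return CheckRecord(CheckName.AGE_GATE, age, minimum, outcome)


print(age_check(34, 18).to_log())
```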

What Happens If You Don’t Have Audit Records?

Recent CFPB settlements with fintech firms that deployed poorly-audited AI systems show the cost:

- 2025 CFPB action vs. Upstart (AI lending platform): $59 million fine for inability to prove AI lending model complied with fair lending rules. The agency cited “lack of adequate record-keeping” as a primary violation.

- FTC action vs. Amazon (ad algorithm): While not pure commerce, the precedent is clear: if you can’t prove to regulators that your algorithm didn’t discriminate, you’re liable even if you didn’t intend to discriminate.

For merchants deploying agents: a single audit failure can trigger formal investigation, mandatory algorithm audits, consent decrees requiring human review of all agent transactions, and substantial fines.

Compliance Auditing in Practice: Merchant Example

A mid-market retailer (annual revenue $50M) deploys an agent to handle clothing sales. The agent can:

- Recommend sizes based on body measurements (input via chatbot)

- Apply loyalty discounts automatically

- Complete the purchase

The audit system must track:

- Fairness gate: Is the size recommendation logic trained on diverse body types? (Regulators now care about algorithmic bias in sizing recommendations.) Log: which training data distribution was used, what recommendation was made, and whether it proved correct.

- Discount gate: Are loyalty discounts applied uniformly across demographics? Log: user_id, loyalty_tier, discount_amount, why this customer qualified.

- Disclosure gate: Did the user know an agent was completing the transaction? Log: disclosure_shown=true, timestamp, user_acknowledgment_token.

When a regulator asks, “Why did this customer get a different price?”, the merchant can pull the audit record and show: “Because they were loyalty tier 3 (which entitles them to 15% off all clothing), not because of age, race, or location.”
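
The discount gate lends itself to a simple self-audit: group the logged discounts by loyalty tier and flag any tier where customers received different rates. The record layout and function names below are assumptions, and the 5% rate for tier 1 is invented for the example (only the 15% for tier 3 comes from the scenario above).

```python
from collections import defaultdict
from typing import Any, Dict, List, Set


def discount_rates_by_tier(records: List[Dict[str, Any]]) -> Dict[int, Set[float]]:
    """Collect the distinct discount rates actually applied within each tier."""
    rates: Dict[int, Set[float]] = defaultdict(set)
    for r in records:
        rates[r["loyalty_tier"]].add(r["discount_rate"])
    return dict(rates)


def inconsistent_tiers(records: List[Dict[str, Any]]) -> List[int]:
    """Tiers where the same tier received more than one rate: worth investigating."""
    return sorted(t for t, rs in discount_rates_by_tier(records).items() if len(rs) > 1)


audit_records = [
    {"user_id": "u1", "loyalty_tier": 3, "discount_rate": 0.15},
    {"user_id": "u2", "loyalty_tier": 3, "discount_rate": 0.15},
    {"user_id": "u3", "loyalty_tier": 1, "discount_rate": 0.05},
]
print(inconsistent_tiers(audit_records))
```

An empty result supports the claim that price differences follow from tier alone. A non-empty one points to the specific tiers where another factor may have influenced the discount.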

FAQ

Q: How long must I retain audit records?

A: It depends on transaction type. Commonly cited baselines are 3 years for general commerce and 7 years for financial products (loans, insurance quotes). Where minors’ data was involved, retain the evidence that age-gating worked but not the child’s personal data itself, since COPPA limits how long children’s personal information may be kept. Check your industry regulator’s guidance; when in doubt, retain for 7 years.

Q: Can I use my observability logs as audit records?

A: Not safely. Observability logs are designed for performance and debugging, not regulatory proof. They’re often compressed, sampled, or deleted. Audit records must be immutable, complete, and retained per regulation. Use separate systems.

Q: What’s the cost of building audit infrastructure?

A: For a mid-market merchant (10M+ transactions/year), expect $200K–$500K in engineering and infrastructure to build a compliant audit system. Ongoing compliance monitoring (via third-party audit) runs $50K–$150K annually. It’s a cost of doing business with agents.

Q: Does the agent need to explain its reasoning in natural language?

A: Regulators are split. The FTC prefers structured, machine-readable logs (easier to audit at scale). The CFPB is increasingly asking for human-readable explanations (for consumer-facing transparency). Best practice: log both—structured decision data for regulators, natural-language explanation for consumers and your own audit teams.

Q: What if my agent makes a mistake and I can’t prove why?

A: You’re liable. If a regulator finds that your agent, for example, charged a consumer twice and you have no audit record showing why, you must refund the consumer, pay the regulator’s fine, and likely undergo mandatory audits. Audit records are your legal defense; without them, you’re defenseless.

Q: Can I use blockchain for compliance audit records?

A: Yes, if implemented correctly. Immutable append-only ledgers (blockchain, Merkle trees, etc.) are ideal for audit records because they prove no data was retroactively changed. However, regulators still require that you can *query* and *explain* records in real time. A blockchain alone isn’t enough; you need indexing and retrieval infrastructure on top.

Q: Should I hire a third-party compliance auditor?

A: Yes, if you’re at scale (>$10M annual revenue) or operating in regulated verticals (fintech, healthcare commerce). A third-party audit firm can validate that your audit system meets regulatory standards and can testify to your compliance in disputes. Expect $5K–$20K per annual audit engagement, separate from any ongoing monitoring costs; that is a small price if you face enforcement action.

Conclusion

Observability tells you how your agents performed. Compliance auditing tells regulators that your agents performed lawfully. As agentic commerce scales, regulators will shift from investigating individual merchants to demanding systemic compliance auditing infrastructure. Merchants who build audit systems now will avoid costly investigations later. Those who don’t will become test cases.

Frequently Asked Questions

Q: What is the difference between agent observability and compliance auditing?

A: Observability provides internal visibility into how AI agents operate and perform within your systems. Compliance auditing, however, creates external proof for regulators that your agents operated within legal bounds. While observability helps you understand agent behavior internally, compliance auditing demonstrates to regulators that autonomous agents followed applicable rules through documented evidence and transaction logs.

Q: Why do regulators require documented evidence of AI agent compliance?

A: Regulators—including the FTC, CFPB, and payment networks like Mastercard and Visa—now require merchants to maintain auditable records showing that autonomous agents made decisions aligned with regulations. This documentation is essential because a merchant can have perfect internal observability but still fail an audit if transaction logs don’t demonstrate legal compliance with applicable laws.

Q: What key compliance areas are regulators currently examining for AI-driven commerce?

A: Regulators are focusing on several critical areas including Fair Lending & Discrimination (ensuring agents don’t deny credit or services based on protected characteristics), consumer protection standards, payment network rules, and financial services regulations. Merchants must be able to prove through auditable records that their agents complied with these specific requirements.

Q: How can merchants prove their AI agents operated within legal bounds?

A: Merchants must build provable records of what their commerce agents decided and why. This involves maintaining comprehensive transaction logs, decision histories, and audit trails that demonstrate agents followed applicable regulations and made compliant decisions throughout their autonomous operations.

Q: What happens if a merchant has good observability but fails compliance auditing?

A: Even with excellent internal observability systems, a merchant can fail a regulatory audit if their transaction logs don’t demonstrate that agents operated legally. This is why compliance auditing specifically focuses on creating external proof of regulatory adherence rather than just internal visibility into agent operations.
