On March 11, 2026, at Perplexity’s Ask developer conference, CTO Denis Yarats announced that Perplexity is moving away from Anthropic’s Model Context Protocol internally. His stated reasons were technical: MCP tool schemas consume up to 72% of available context window space before an agent processes a single user message, authentication across multiple MCP servers introduces friction, and most MCP features go unused in production. One documented deployment showed three MCP servers consuming 143,000 of 200,000 tokens — leaving only 57,000 for actual conversation, documents, and reasoning.
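The arithmetic behind those figures is easy to check. Here is a minimal sketch that uses only the two numbers quoted above; the function and constant names are mine, for illustration only.

```python
# Figures quoted in the paragraph above.
CONTEXT_WINDOW_TOKENS = 200_000
MCP_SCHEMA_TOKENS = 143_000


def schema_overhead(schema_tokens: int, window_tokens: int) -> tuple[int, float]:
    """Return (tokens left for the conversation, share of the window used by schemas)."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    remaining = window_tokens - schema_tokens
    return remaining, schema_tokens / window_tokens


remaining, share = schema_overhead(MCP_SCHEMA_TOKENS, CONTEXT_WINDOW_TOKENS)
print(f"{share:.1%} of the window used, {remaining:,} tokens left")
```

The share works out to 71.5%, which is where the rounded 72% headline number comes from.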

The technical criticism is legitimate. MCP has real overhead costs. Nobody disputes that.

But here is what caught my attention. Fourteen days before Yarats made that statement, on February 25, Perplexity launched Perplexity Computer, a $200-per-month cloud-based multi-agent system that breaks high-level goals into tasks, spins up specialized sub-agents, and executes them in parallel across web research, document drafting, data processing, and API calls. It has 400-plus app integrations. It connects to external tools through (you guessed it) a protocol layer that routes agent actions to external services.

And the Perplexity Comet? That is a $699 physical desktop computer built on a Qualcomm Snapdragon X Elite with 32GB RAM and 1TB storage, running PerplexityOS and designed to let AI agents handle your digital life. It ships with a year of Perplexity Pro built in. After year one, you pay to maintain the agent capabilities.

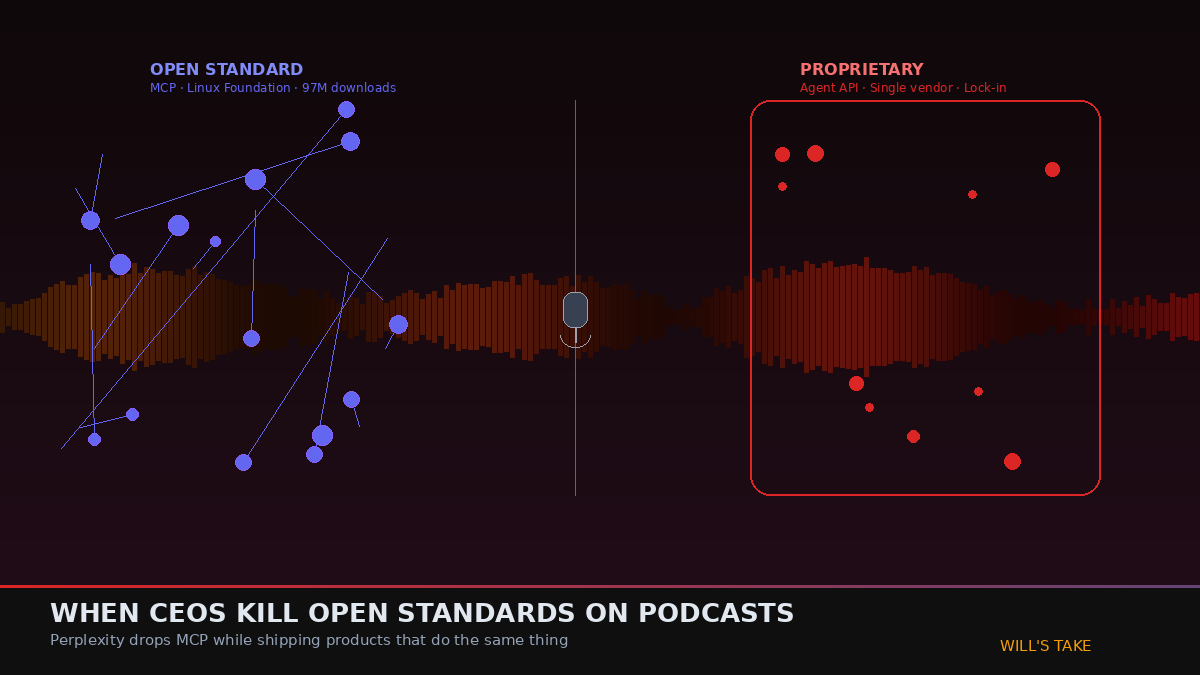

Perplexity simultaneously launched products that do what MCP does — connect AI agents to external tools and services — while publicly declaring MCP a flawed approach. They replaced MCP internally with their own Agent API: a single endpoint that routes to models from OpenAI, Anthropic, Google, xAI, and NVIDIA with built-in tools, all accessible under one API key using OpenAI-compatible syntax.

Read that again. They did not eliminate the protocol layer between agents and tools. They replaced MCP with their own proprietary version of the same thing.

The Pattern

This is not a technical disagreement. This is a competitive maneuver. When a company publicly criticizes an open standard while simultaneously shipping a proprietary alternative that serves the same function, the criticism is not about the technology. It is about market positioning.

MCP, donated to the Linux Foundation in December 2025 and governed by six co-founders (OpenAI, Anthropic, Google, Microsoft, AWS, and Block), represents the open-protocol path. Ninety-seven million monthly SDK downloads. 5,867 servers. Adopted by every major AI lab. If MCP becomes the universal standard, Perplexity’s Agent API is just another endpoint. But if Perplexity can cast doubt on MCP’s viability, their proprietary API becomes the alternative. Developers who build on it are locked into the Perplexity ecosystem.

This is the embrace-extend-extinguish playbook that Microsoft ran against Netscape in the 1990s and that every platform company has studied since. Step one: acknowledge the open standard exists. Step two: build a proprietary alternative that is slightly better for your specific use case. Step three: publicly undermine confidence in the open standard so developers choose your version instead.

Perplexity still runs an MCP server for backward compatibility. They know they cannot ignore 97 million monthly SDK downloads. But their flagship developer product routes around it.

The CEO-as-Media-Channel Problem

This is where the story gets bigger than Perplexity. We are in an era where AI company founders are not just running companies — they are running media operations. Aravind Srinivas has appeared on Lex Fridman, CNBC, Y Combinator’s podcast, Stanford GSB, and dozens of other shows in the past 12 months. Sam Altman is on every major podcast. Dario Amodei wrote a 15,000-word essay that functioned as a product manifesto. Satya Nadella, Sundar Pichai, and Jensen Huang deliver keynotes that move billions in market value.

These are not casual conversations. When the CEO of a company valued at $9 billion makes a technical claim about a competing technology on stage at a developer conference, that statement reaches thousands of developers making architecture decisions. It reaches investors evaluating which protocols to bet on. It reaches enterprise buyers deciding which stack to adopt. The statement functions as a market signal whether the CEO intends it to or not.

The SEC has been clear about this in other contexts. In 2018, it charged Elon Musk with securities fraud for misleading tweets about taking Tesla private at $420 per share with “funding secured.” Musk settled, paid penalties, and agreed to step down as Tesla’s chairman. In 2022, Twitter shareholders sued Musk for allegedly making false statements designed to drive down Twitter’s stock price before his acquisition; that trial is ongoing in 2026. In 2024, the SEC charged DraftKings with Regulation FD violations stemming from posts on its CEO’s social media accounts that selectively disclosed material information.

The legal framework exists. What does not exist — yet — is clear precedent for how it applies to CEO statements about competing open-source technologies in the AI stack. When Yarats says MCP wastes 72% of context tokens, that is a measurable claim that could be verified or disputed with benchmarks. But when the broader narrative becomes “MCP is flawed, use our API instead,” delivered from the stage of a developer conference by a company that directly competes with MCP — that is a different kind of statement.

The Landscape of CEO Technical Claims

Perplexity is not alone in this. The AI industry has entered a phase where CEOs routinely make public claims about competing technologies that serve dual purposes — they are simultaneously technical commentary and competitive positioning.

When Sam Altman says reasoning models will make traditional search obsolete, that is both a prediction and a sales pitch for ChatGPT over Google. When Sundar Pichai announces that Gemini outperforms Claude on certain benchmarks, he is both sharing data and steering enterprise procurement decisions. When Jensen Huang describes CUDA as the only viable path for AI inference, he is both stating an opinion and protecting NVIDIA’s moat against AMD and custom silicon competitors.

None of these statements are lies. They are all partially true, contextually selective, and strategically timed. They are the kind of claims that an informed technical audience can evaluate — but that a broader audience of investors, enterprise buyers, and media commentators often cannot. And in an industry where a single protocol decision can lock a company into a specific ecosystem for years, the stakes of these statements are enormous.

The podcast circuit has become the mechanism. A CEO appears on a popular show — Lex Fridman, All-In, Acquired, The Logan Bartlett Show — and makes a series of technical claims that are directionally true but framed to benefit their company. The host, who is typically smart but not a domain expert in the specific technology being discussed, does not push back on the framing. The clip gets shared on X and LinkedIn. Developers make decisions based on the signal. The protocol wars play out not in benchmarks and RFCs but in podcast sound bites.

What the Data Actually Shows

Let us do what the podcasts do not: look at the data.

The 72% context consumption figure is real, but only for a specific configuration with three MCP servers loaded simultaneously. Most production deployments use one or two servers at a time, and the March 2025 Streamable HTTP transport update significantly reduced overhead. The claim is technically accurate and practically misleading. It is like saying cars are dangerous because they crash at 150 mph. True. Also not how most people drive.
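To see how much the headline number depends on server count, here is a rough sketch. It assumes, purely for illustration, that the 143,000 tokens in the documented three-server deployment split evenly across servers. Real servers vary widely in schema size, so treat the output as an order-of-magnitude guide rather than a measurement.

```python
CONTEXT_WINDOW_TOKENS = 200_000

# Assumption: the 143,000 schema tokens divide evenly across the three servers.
TOKENS_PER_SERVER = 143_000 / 3


def schema_share(server_count: int) -> float:
    """Share of the context window consumed by tool schemas for N servers."""
    if server_count < 0:
        raise ValueError("server_count must be non-negative")
    return server_count * TOKENS_PER_SERVER / CONTEXT_WINDOW_TOKENS


for n in (1, 2, 3):
    print(f"{n} server(s): {schema_share(n):.1%} of the window")
```

Under that even-split assumption, one server takes a bit under a quarter of the window and two take a bit under half.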

Perplexity’s Agent API solves the overhead problem by internalizing the tool routing. Instead of the model discovering tools through MCP schemas at runtime, Perplexity pre-routes to known tools server-side. This is faster and leaner for Perplexity’s specific use case — a single-agent system with a known tool set. It is worse for the general case where an agent needs to dynamically discover and connect to arbitrary tools, which is exactly what MCP was designed for.
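The tradeoff can be shown with a toy model. This is not Perplexity's implementation or the MCP SDK. The tool schemas, the four-characters-per-token heuristic, and every function name below are hypothetical, chosen only to contrast loading full schemas into context with keeping them server-side.

```python
import json

# Hypothetical tool schemas, standing in for what a tool server might advertise.
TOOL_SCHEMAS = [
    {
        "name": "search_web",
        "description": "Search the web and return the top results.",
        "parameters": {"query": {"type": "string"}, "limit": {"type": "integer"}},
    },
    {
        "name": "read_file",
        "description": "Read a file from the workspace.",
        "parameters": {"path": {"type": "string"}},
    },
]


def estimate_tokens(text: str) -> int:
    """Crude heuristic (an assumption): roughly four characters per token."""
    return max(1, len(text) // 4)


def runtime_discovery_cost(schemas: list[dict]) -> int:
    """Runtime discovery: every full schema is serialized into the model's context."""
    return sum(estimate_tokens(json.dumps(s)) for s in schemas)


def pre_routed_cost(schemas: list[dict]) -> int:
    """Pre-routing: only tool names reach the model; schemas stay on the server."""
    return sum(estimate_tokens(s["name"]) for s in schemas)


# Server-side dispatch table for the pre-routed approach (handlers are stubs).
HANDLERS = {
    "search_web": lambda args: f"results for {args['query']}",
    "read_file": lambda args: f"contents of {args['path']}",
}


def route(tool_name: str, args: dict) -> str:
    """Dispatch a call to a known tool; anything outside the fixed set fails."""
    if tool_name not in HANDLERS:
        raise KeyError(f"unknown tool: {tool_name}")
    return HANDLERS[tool_name](args)


print(runtime_discovery_cost(TOOL_SCHEMAS), pre_routed_cost(TOOL_SCHEMAS))
print(route("search_web", {"query": "mcp overhead"}))
```

The pre-routed path is cheaper in context, but `route` can only reach tools that were wired in ahead of time. That is the limitation described above: a fixed tool set is lean, and an arbitrary one needs some form of discovery.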

Perplexity Computer and Comet both connect agents to external services. They both need a protocol for tool calling. They both need authentication, schema discovery, and response handling. They just do it through Perplexity’s proprietary layer instead of MCP. The architectural requirement is identical. The business model is different.

MCP adoption continues to accelerate regardless of Perplexity’s position. OpenAI integrated it across their Agents SDK in March 2025. Google DeepMind confirmed Gemini support. The Linux Foundation governance structure ensures no single company controls the protocol. Ninety percent of organizations were expected to use MCP by end of 2025. Whether Perplexity uses it internally is a footnote to the adoption curve.

The Line That Does Not Exist

Here is the uncomfortable truth: there is no clear line between a CEO offering a legitimate technical opinion and a CEO strategically undermining a competing technology for commercial advantage. The same statement can be both simultaneously.

Yarats’s MCP criticism is technically grounded. The overhead is real. The authentication complexity is real. But the timing — two weeks after launching products that compete directly with MCP’s function — transforms the context of the criticism. It is no longer just a technical observation. It is positioning.

The regulatory framework has not caught up. The SEC regulates material misstatements about a company’s own securities and selective disclosure of material nonpublic information. It does not regulate a CEO’s opinion about a competing open-source protocol. Antitrust law addresses monopolistic behavior and anticompetitive agreements but not a CTO’s conference talk about tool schemas. The FTC can act on deceptive trade practices but has no framework for evaluating technical claims about protocol efficiency at a developer conference.

What we have instead is the court of developer opinion. And developers are smart. They can read the context. When Perplexity says “MCP is too expensive” while shipping products that do the same thing through proprietary channels, developers notice the contradiction. The Threads and X responses to Yarats’s announcement were full of people pointing out exactly this tension.

But not all developers are paying that close attention. Not all enterprise buyers read the Threads discourse. Not all investors understand the difference between a legitimate architectural criticism and competitive FUD. And in the gap between what sophisticated observers understand and what the broader market perceives, there is room for strategic narratives to do real damage to open standards.

What I Am Actually Worried About

The Perplexity-MCP situation is a small example of a much larger dynamic. As AI becomes critical infrastructure — powering commerce, healthcare, education, legal work — the CEOs of AI companies gain influence over technology adoption that rivals the influence of standards bodies, regulatory agencies, and open-source governance organizations.

When Jensen Huang says something about inference architecture, companies change their procurement plans. When Sam Altman makes a claim about reasoning capabilities, competitors adjust their roadmaps. When Aravind Srinivas or Denis Yarats questions MCP’s viability, some number of developers who were evaluating MCP will choose a different path.

This influence is not inherently bad. CEOs should share their technical perspectives. Public debate about protocol design makes the ecosystem stronger. But there is a difference between “we found MCP’s overhead too high for our specific use case, so we built an alternative” and “MCP is broken, here is our proprietary replacement.” The first is an honest engineering decision. The second is a market play dressed as technical commentary.

The AI industry needs to develop norms around this. Something between the SEC’s securities fraud framework and the current free-for-all where any CEO can make any technical claim about any competing technology with zero accountability. Maybe it is an industry code of conduct. Maybe it is more rigorous technical journalism that pressures CEOs to back claims with published benchmarks. Maybe it is just developers getting better at reading the commercial context behind every technical statement.

I do not have the answer. But I know the question matters. Because the protocols we adopt in 2026 will shape the AI stack for a decade. And those decisions should be made on engineering merit, not on which CEO had the best podcast appearance last week.
