The Hallucination Problem in Agentic Commerce

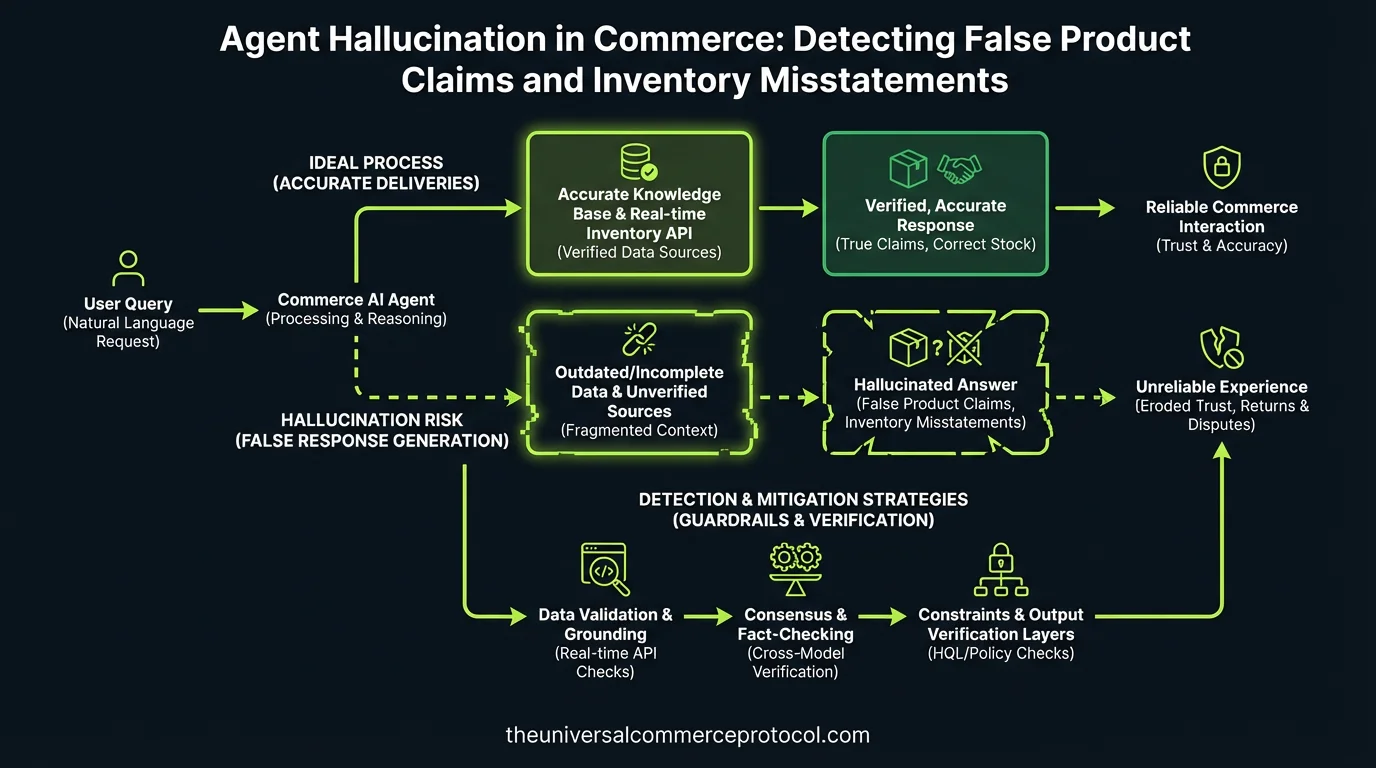

AI agents powering commerce interactions face a unique vulnerability: hallucination—generating plausible but false information about products, inventory, pricing, and fulfillment capabilities. Unlike chatbots where hallucination is inconvenient, in agentic commerce systems it directly causes financial loss, chargebacks, and regulatory exposure.

A Stripe payment agent that quotes a $200 SKU at $50 creates a pricing arbitrage loss on every order placed at that price. A fulfillment agent that promises next-day delivery on out-of-stock items generates cancellations and refund friction. A product recommendation agent that invents fabric content claims exposes merchants to FTC false advertising liability.

Yet current observability frameworks (covered in the March 12 Architecting Real-Time Observability post) focus on latency and throughput, not hallucination detection. This gap leaves merchants blind to systematic claim failures until customer complaints or legal exposure surface.

Where Hallucinations Occur in Commerce Workflows

Product Information Layer: Agents pull from product databases but often operate on stale, incomplete, or unstructured data. When training data includes contradictory specs (e.g., multiple color options listed inconsistently), agents interpolate new claims. A Shopify agent asked about a jacket’s waterproof rating might take “water-resistant” from one source and infer “fully waterproof” from it, creating a false claim the merchant never made.

Inventory Reconciliation: Real-time stock sync (covered March 12) prevents *some* inventory hallucinations, but agents trained on historical inventory patterns can still predict stock incorrectly. If a product typically restocks Thursdays, an agent might tell a Wednesday customer “it will be back tomorrow” without verifying actual restock schedules. When Thursday’s shipment is delayed, the agent’s claim becomes false.

Pricing and Promotions: Agents integrating pricing engines sometimes hallucinate discount eligibility. A Mastercard agentic commerce settlement agent might apply a “first-time buyer” discount that doesn’t exist in the promotion ruleset, or combine two mutually exclusive discounts because the agent’s training data showed both historically.

Fulfillment Commitments: This is the highest-liability hallucination category. Agents making delivery promises without checking carrier APIs, regional restrictions, or current fulfillment capacity create unfulfillable orders. A cross-border payment settlement agent (March 12 coverage) might confirm a delivery date to a customer in India without validating whether the merchant’s 3PL partner serves that region.

Detection Mechanisms: Fact Verification and Confidence Scoring

Claim-to-Source Tracing: Production agentic systems should implement mandatory tracing of agent claims to source data. When an agent states “In stock: 47 units,” the system logs the exact inventory API call, timestamp, and response. If inventory drops to 5 units 30 seconds later and an order for 20 is placed based on the agent’s claim, the trace reveals whether the agent was working with stale data or made an unsupported inference.

Merchants on platforms like Shopify can implement this via UCP agent middleware that intercepts all product, inventory, and pricing claims before agent output reaches customers. The middleware compares agent statements against a real-time data snapshot taken at claim time.
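As a rough illustration of claim-to-source tracing, the sketch below records each claim next to the snapshot value it was checked against. The names `ClaimTrace` and `trace_claim`, the field names, and the dictionary-shaped snapshot are all assumptions made for this example; they are not part of any Shopify, UCP, or Stripe API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Mapping, Optional


@dataclass(frozen=True)
class ClaimTrace:
    """One agent claim tied to the source value it was checked against."""
    claim_field: str
    claimed_value: Any
    source: str            # hypothetical label for the system that was queried
    source_value: Any
    checked_at: datetime
    supported: bool


def trace_claim(
    claim_field: str,
    claimed_value: Any,
    source: str,
    snapshot: Mapping[str, Any],
    now: Optional[datetime] = None,
) -> ClaimTrace:
    """Compare a claim with the snapshot taken at claim time and log the result."""
    source_value = snapshot.get(claim_field)
    return ClaimTrace(
        claim_field=claim_field,
        claimed_value=claimed_value,
        source=source,
        source_value=source_value,
        checked_at=now or datetime.now(timezone.utc),
        # A claim is supported only if the field exists and the values match.
        supported=claim_field in snapshot and source_value == claimed_value,
    )


# Usage: the snapshot stands in for an inventory API response (assumed shape).
inventory_snapshot = {"stock": 47}
stock_trace = trace_claim("stock", 47, "inventory_api", inventory_snapshot)
```

Persisting each `ClaimTrace` with the order ID would give the audit trail needed to tell stale data apart from an unsupported inference when an order is disputed.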

Confidence Scoring: Advanced agentic systems assign confidence scores to specific claims. If an agent says “This item qualifies for free shipping,” the system calculates confidence based on whether that claim appears in current promotion data. Low confidence (below 0.85) triggers a human review or a more cautious phrasing: “This item *may* qualify for free shipping—our team will confirm.”
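One possible shape for this check is sketched below. The 0.85 threshold comes from the text above; the scoring formula itself (full confidence for fresh matching data, decaying linearly as the data ages) is an assumption chosen for illustration, as are the names `score_claim` and `phrase_claim`.

```python
from typing import Any, Mapping, Tuple

REVIEW_THRESHOLD = 0.85  # threshold mentioned in the text


def score_claim(
    claim_key: str,
    claimed_value: Any,
    promo_data: Mapping[str, Any],
    data_age_seconds: float,
    max_age_seconds: float = 300.0,  # assumed freshness window
) -> float:
    """Hypothetical score: 0.0 if unsupported, 0.5 to 1.0 depending on data age."""
    if claim_key not in promo_data or promo_data[claim_key] != claimed_value:
        return 0.0
    freshness = max(0.0, 1.0 - data_age_seconds / max_age_seconds)
    return 0.5 + 0.5 * freshness


def phrase_claim(confident: str, hedged: str, confidence: float) -> Tuple[str, bool]:
    """Return the wording to show and whether the claim needs human review."""
    if confidence < REVIEW_THRESHOLD:
        return hedged, True
    return confident, False


# Usage: promotion data fetched 150 seconds ago yields a score of 0.75.
score = score_claim("free_shipping", True, {"free_shipping": True}, 150.0)
message, needs_review = phrase_claim(
    "This item qualifies for free shipping.",
    "This item may qualify for free shipping. Our team will confirm.",
    score,
)
```

Because the score is computed outside the model, the threshold can be tuned per claim type without touching the agent itself.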

Anthropic’s Claude marketplace agents and Google’s Gemini-based commerce agents should embed confidence thresholds in their output contracts. A claim below threshold gets flagged in observability dashboards (as covered March 12) for immediate merchant review.

Negative Assertion Testing: Train agents to explicitly state what they *cannot* verify. Instead of omitting warranty information and hoping the customer doesn’t ask, the agent should say: “I don’t have access to the manufacturer’s warranty details—let me connect you with our support team.” This prevents customers from inferring false negatives (assuming no warranty exists) from agent silence.

Guardrails: Preventing Hallucination Before It Reaches Customers

Fact Database Locking: Before an agent makes any claim about a product, inventory level, price, or fulfillment capability, it queries a read-only fact database. The agent can only assert information that exists in that database with explicit confidence metadata. If an SKU doesn’t appear in the fact database, the agent cannot claim its existence.

Stripe’s agentic payment agent architecture should enforce this: pricing claims hit a Stripe-controlled price API; inventory claims hit the merchant’s inventory system; delivery claims validate against active carrier integrations.
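A minimal sketch of the lookup-or-refuse rule follows, assuming the fact database can be represented as an in-memory read-only mapping keyed by SKU. `build_fact_db`, `assert_fact`, and `UnverifiedClaimError` are hypothetical names; a production system would query the pricing, inventory, and carrier systems named above instead.

```python
from types import MappingProxyType
from typing import Any, Mapping


class UnverifiedClaimError(Exception):
    """Raised when the agent tries to assert something the fact database lacks."""


def build_fact_db(records: Mapping[str, Mapping[str, Any]]) -> Mapping[str, Mapping[str, Any]]:
    """Copy the records into read-only views so agent code cannot mutate them."""
    return MappingProxyType(
        {sku: MappingProxyType(dict(facts)) for sku, facts in records.items()}
    )


def assert_fact(fact_db: Mapping[str, Mapping[str, Any]], sku: str, field: str) -> Any:
    """Return a verified fact, or refuse if the SKU or field is unknown."""
    if sku not in fact_db:
        raise UnverifiedClaimError(f"SKU {sku!r} is not in the fact database")
    facts = fact_db[sku]
    if field not in facts:
        raise UnverifiedClaimError(f"No verified {field!r} for SKU {sku!r}")
    return facts[field]


# Usage with an illustrative SKU and price.
fact_db = build_fact_db({"JKT-001": {"price_cents": 20000, "stock": 47}})
price_cents = assert_fact(fact_db, "JKT-001", "price_cents")
```

Raising an exception rather than returning a default forces the calling code to decide explicitly what the agent says when a fact is missing.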

Constraint-Based Agent Design: Instead of allowing agents free-form language generation about products, constrain output to templated claims verified against source systems. Example:

Product: [VERIFIED NAME]

Price: [VERIFIED PRICE as of TIMESTAMP]

Availability: [VERIFIED STOCK COUNT or "Out of Stock"]

Shipping: [VERIFIED ELIGIBLE REGIONS from carrier API]

This eliminates the narrative space where hallucinations breed. The agent cannot say “beautiful Italian leather”—it can only surface what the product database explicitly contains.
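The template above could be filled by a small renderer that refuses to produce output when any verified field is missing. This is a sketch under assumed field names (`name`, `price_cents`, `price_as_of`, `stock`, `regions`); the function `render_product_card` is hypothetical.

```python
from typing import Any, Mapping

REQUIRED_FIELDS = ("name", "price_cents", "price_as_of", "stock", "regions")


def render_product_card(record: Mapping[str, Any]) -> str:
    """Fill the fixed template from a verified record; never add free text."""
    missing = [key for key in REQUIRED_FIELDS if key not in record]
    if missing:
        raise ValueError(f"Cannot render, unverified fields: {missing}")
    cents = record["price_cents"]
    price = f"${cents // 100}.{cents % 100:02d}"
    availability = str(record["stock"]) if record["stock"] > 0 else "Out of Stock"
    regions = ", ".join(sorted(record["regions"]))
    return "\n".join(
        [
            f"Product: {record['name']}",
            f"Price: {price} as of {record['price_as_of']}",
            f"Availability: {availability}",
            f"Shipping: {regions}",
        ]
    )


# Usage with illustrative values.
card = render_product_card(
    {
        "name": "Trail Jacket",
        "price_cents": 20000,
        "price_as_of": "2025-01-01T00:00:00Z",
        "stock": 0,
        "regions": ["US", "CA"],
    }
)
```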

Hallucination Frequency Monitoring: Track the rate at which agent claims get contradicted by subsequent customer service inquiries. If 3% of agent claims about “free returns” result in customer service tickets saying “the agent said this but our policy is different,” that’s a hallucination signal requiring retraining or guardrail adjustment.
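The contradiction rate can be computed per claim type from two event streams: claims the agent made and support tickets that contradict a claim. The sketch below assumes both are available as lists of claim-type labels, and uses the 3% figure from the example above as an illustrative alert level rather than a recommended one.

```python
from collections import Counter
from typing import Dict, Iterable, List


def contradiction_rates(
    claims_made: Iterable[str], contradicted: Iterable[str]
) -> Dict[str, float]:
    """Share of claims of each type later contradicted by a support ticket."""
    made = Counter(claims_made)
    disputed = Counter(contradicted)
    return {claim_type: disputed[claim_type] / count for claim_type, count in made.items()}


def flag_claim_types(rates: Dict[str, float], alert_rate: float = 0.03) -> List[str]:
    """Claim types whose contradiction rate meets or exceeds the alert level."""
    return sorted(claim_type for claim_type, rate in rates.items() if rate >= alert_rate)


# Usage with made-up counts: 3 of 100 "free_returns" claims were contradicted.
rates = contradiction_rates(
    ["free_returns"] * 100 + ["in_stock"] * 200,
    ["free_returns"] * 3 + ["in_stock"] * 1,
)
flagged = flag_claim_types(rates)
```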

Regulatory and Financial Exposure

The FTC’s recent focus on AI transparency means merchants cannot claim plausible deniability if agentic systems make false product claims. If an agent states a product is “hypoallergenic” and the merchant never asserted that, the merchant is still liable if the FTC determines the claim appeared to come from the merchant.

Agentic commerce merchants should document:

- Which claims agents are authorized to make (product name, price, stock—not health claims or warranty terms)

- How claims are verified before reaching customers

- Audit logs showing claim-to-source tracing for disputed orders

- Confidence thresholds below which agents escalate to human review

Payment processors like Visa and Mastercard (both active in agentic commerce) should require hallucination detection as part of merchant compliance. A high false-claim rate increases chargeback frequency and should trigger merchant review, similar to existing fraud scoring models.

FAQ

Q: How do I know if my commerce agent is hallucinating?

A: Audit customer service tickets for “the agent said X but that’s not true” patterns. Track chargeback reasons mentioning misrepresented product specs or availability. Implement claim-to-source logging for all product, pricing, and inventory assertions.

Q: Can I use confidence scoring without retraining my agent?

A: Yes. Wrap existing agent outputs with a fact-checking layer that assigns confidence scores post-generation. If the agent’s claim doesn’t match current data, suppress it or add a disclaimer. This is a guardrail added at the API/middleware layer, not requiring agent retraining.

Q: What’s the difference between hallucination and stale data?

A: Hallucination is inventing information not in any training or source data. Stale data is outdated information from a source the agent correctly queried. Both are problems, but stale data is preventable with real-time API queries; hallucination requires architectural constraints on what agents can assert.

Q: Should I disable agent claims about products altogether and only show verified catalog data?

A: No—agents should describe products, answer questions, and make recommendations. But restrict free-form claims. Use templated outputs, fact-source tracing, and confidence thresholds. Agents are most valuable for context and reasoning; facts should come from locked databases.

Q: How do I balance agent responsiveness with hallucination prevention?

A: Pre-cache the fact databases agents query most frequently (top 1,000 SKUs, current pricing, active promotions). This keeps agent latency low while maintaining verification. Use confidence scoring to route uncertain claims to async verification instead of blocking the agent’s response.
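One way to pre-cache is a small time-to-live cache that is warmed with the most-queried SKUs and refetches an entry once it is older than the allowed age. The class `FactCache`, the 30-second default, and the `fetch` callback are assumptions for this sketch, not a prescribed design.

```python
import time
from typing import Any, Callable, Dict, Iterable, Tuple


class FactCache:
    """Serve facts from memory while fresh; refetch once an entry expires."""

    def __init__(
        self,
        fetch: Callable[[str], Any],
        ttl_seconds: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[Any, float]] = {}

    def warm(self, skus: Iterable[str]) -> None:
        """Pre-load the SKUs agents ask about most often."""
        for sku in skus:
            self._entries[sku] = (self._fetch(sku), self._clock())

    def get(self, sku: str) -> Any:
        """Return a cached fact if still fresh, otherwise fetch and store it."""
        entry = self._entries.get(sku)
        if entry is not None and self._clock() - entry[1] < self._ttl:
            return entry[0]
        value = self._fetch(sku)
        self._entries[sku] = (value, self._clock())
        return value
```

A short time-to-live keeps the stale-data window bounded; the right value depends on how quickly price and stock change for a given catalog.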

Q: What role should payment processors play in hallucination detection?

A: Payment processors can flag merchants with elevated chargeback rates linked to misrepresented products or availability. They should require merchants deploying commerce agents to disclose claim-verification methods and audit logs, similar to existing PCI compliance audits.
