Last week I wrote about Claude Cowork as Anthropic’s edge computing play. The piece focused on the architectural shift — moving execution from cloud to client. But there is a second-order consequence I keep thinking about, and it is potentially bigger than the architecture itself: what happens to the power grid if the Cowork model wins?

The Numbers That Should Make Everyone Uncomfortable

Global data center electricity consumption is projected at 1,050 TWh by 2026. To put that in context, that is roughly the total electricity consumption of Japan. AI now accounts for approximately 15% of that — up from 2-3% in 2023. A single ChatGPT query consumes up to 10 times more energy than a Google search. Generative AI queries alone are estimated to consume 15 TWh in 2025, scaling to 347 TWh by 2030. Inference — not training — now represents 80-90% of AI compute demand, and inference spending crossed 55% of all AI cloud infrastructure at $37.5 billion in early 2026, surpassing training costs for the first time.
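To get a feel for how steep that generative AI projection is, here is a quick sketch that converts the two endpoints quoted above (15 TWh in 2025, 347 TWh in 2030) into an implied compound annual growth rate. The function name is my own, and it assumes smooth compounding between the two years, which real demand will not follow exactly.

```python
def implied_annual_growth(start_twh: float, end_twh: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_twh / start_twh) ** (1 / years) - 1

# Endpoints quoted in the text: 15 TWh (2025) and 347 TWh (2030).
growth = implied_annual_growth(15, 347, 2030 - 2025)
print(f"Implied growth: {growth:.0%} per year")
```

In other words, the projection implies that query energy nearly doubles every year for five years running.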

The grid is already buckling. Average US electricity prices hit 19 cents per kWh by the end of 2025. Bloomberg reported that data centers are sending power bills soaring. CNBC confirmed that electricity prices are rising at double the rate of inflation, with data center demand leaving no relief in sight. Goldman Sachs projects that this pressure will continue through 2028 at minimum. An average large AI-focused data center consumes as much electricity as 100,000 households, and the largest facilities under construction will require 20 times more.

These are not abstract numbers. Real people are paying more for electricity because AI companies are consuming power at a pace utilities never planned for. Every AI query that runs in a data center adds to this pressure. Every query that runs somewhere else relieves it.

The Edge Arithmetic

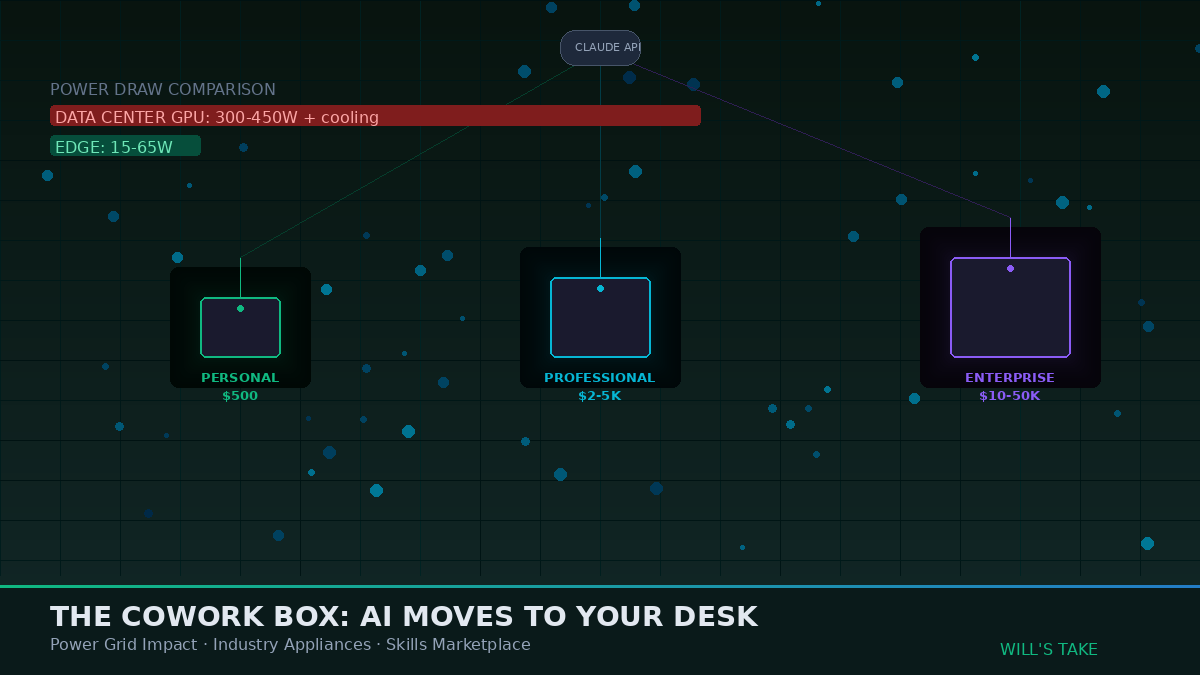

Here is where the Cowork model gets interesting from a power perspective. When Claude Cowork runs a script on your laptop, iterates on a document, processes a spreadsheet, or manipulates files, that work happens on your local CPU drawing 5-65 watts depending on load. An Apple M4 Mac Mini idles at under 5 watts and peaks at around 65 watts under heavy LLM inference. An NVIDIA A100 GPU in a data center draws 300-450 watts doing equivalent work — before you add cooling, networking, storage, and facility overhead that typically doubles the raw compute power draw.
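The wattage figures above can be lined up in a few lines of Python. This is a rough sketch using only the numbers quoted in this section. The 2.0 overhead multiplier is my reading of "typically doubles," and the constant and function names are my own. It compares power draw only. It says nothing about how much work each device finishes per watt, and a data center GPU is usually shared across many users.

```python
# Figures quoted in the text. The multiplier encodes the "typically doubles" claim.
EDGE_PEAK_W = 65            # Mac Mini peak under heavy load
GPU_RANGE_W = (300, 450)    # A100 range as quoted above
FACILITY_OVERHEAD = 2.0     # assumption: cooling, networking, storage, facility

def facility_watts(gpu_watts: float, overhead: float = FACILITY_OVERHEAD) -> float:
    """Raw GPU draw scaled up by facility overhead."""
    return gpu_watts * overhead

for gpu_w in GPU_RANGE_W:
    total = facility_watts(gpu_w)
    ratio = total / EDGE_PEAK_W
    print(f"GPU at {gpu_w} W -> {total:.0f} W with overhead, {ratio:.1f}x the edge peak")
```

On these numbers the data center side draws roughly 9 to 14 times the peak draw of the desktop device.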

The efficiency gap is not marginal. Modern ARM processors and specialized AI accelerators at the edge consume approximately 100 microwatts for inference operations that cost 1 watt in the cloud — a 10,000x efficiency advantage for certain workload types. Even for heavier tasks, edge AI processing typically costs 40-60% less than cloud for high-volume inference workloads after initial hardware investment. Hybrid edge-cloud architectures show energy savings up to 75% and cost reductions exceeding 80% compared to pure cloud processing.

The Cowork model does not eliminate cloud inference: the model still reasons on Anthropic’s GPUs. What it eliminates is the execution overhead. If Claude iterates 50 times on a local script instead of making 50 API round trips, that is up to 50 cloud calls that never happen, because only the steps that need fresh reasoning go back to the API. At scale across millions of Cowork users, each avoiding dozens of unnecessary cloud round trips per session, the aggregate power savings become substantial. Not enough to solve the energy crisis alone, but enough to meaningfully bend the curve on inference demand growth.

What If Cowork Were a Physical Product?

This is the thought experiment I cannot stop running. Today, Cowork is software — a lightweight Linux VM running inside Claude Desktop on your Mac or Windows machine. It uses whatever CPU you already have. But the hardware industry is already building the components that would make a dedicated Cowork appliance not just possible but economically compelling.

Qualcomm shipped its AI On-Prem Appliance Solution in January 2025 — a desktop or wall-mounted unit that runs generative AI, LLMs, and real-time analytics entirely on-premises. It supports models up to 120 billion parameters. It handles synthetic data generation, model fine-tuning, and inference locally. Enterprise and government deployments began Q2 2026. NVIDIA’s Jetson Thor series delivers up to 2,070 FP4 TFLOPS of AI compute with 128 GB of memory at 40-130 watts — a fraction of data center power draw. The inference chip market is projected to exceed $50 billion in 2026, up from $20 billion in 2025.

Now imagine Anthropic — or a hardware partner — takes the Cowork architecture and packages it into a purpose-built device. Not a general-purpose computer. A Cowork appliance. A box that sits on a desk or mounts in a server closet, runs the local execution environment, connects to Claude’s reasoning API over encrypted channels, and ships with industry-specific skill packages pre-installed.

The Industry-Specific Cowork Box

This is where the idea gets concrete. Consider what a tiered product line could look like.

A Cowork Personal — roughly the form factor and price point of a Mac Mini, $500-800 — ships with a capable ARM processor, 32 GB of unified memory, and the base Cowork runtime. It handles document processing, code execution, spreadsheet manipulation, email drafting, research synthesis. It is the knowledge worker appliance. It draws 15-25 watts under typical load. It replaces hundreds of cloud API calls per day with local execution. For a solo professional or small team, the device pays for itself within months through reduced API costs and faster iteration speed.

A Cowork Professional — $2,000-5,000 range — adds more memory, faster processing, local model caching for frequently used reasoning patterns, and the ability to serve a small team of 5-10 concurrent users. It ships with a category of industry skills pre-loaded. A law firm version comes with legal research, contract analysis, document review, and citation management skills. An accounting firm version includes tax code navigation, financial statement analysis, audit workpaper generation, and compliance checking. A marketing agency version ships with content pipeline, SEO analysis, social scheduling, and campaign reporting skills.

A Cowork Enterprise, at $10,000-50,000 depending on configuration, targets hospitals, school districts, government agencies, and large professional services firms. This is rack-mountable. It serves 50-500 concurrent users. It includes on-premises model caching so frequently used reasoning patterns execute locally without cloud round trips. It ships with compliance certifications: HIPAA for healthcare, FERPA for education, CJIS for law enforcement, FedRAMP for federal agencies. The hospital version includes clinical documentation, diagnostic support, medical coding, and patient communication skills. The school district version includes curriculum development, IEP writing, student assessment analysis, and parent communication skills.

The key insight is that the skills layer — the organized instruction bundles that Anthropic already built into Cowork — becomes the product differentiation. The hardware is commodity. The Claude reasoning API is shared infrastructure. But the skills packages that make a Cowork box immediately useful for a specific profession on day one — that is where the value concentrates.

The Data Sovereignty Angle

There is a reason Qualcomm’s appliance tagline is “sovereign, highly security-focused.” Every industry that handles sensitive data has the same structural problem: they need AI capabilities but cannot send their data to the cloud.

Hospitals cannot send patient records to Anthropic’s servers for processing. Artisight, the only smart hospital platform with independent HIPAA certification for its full AI training and deployment process, runs entirely inside the hospital firewall. No PHI leaves the building. No patient data is stored externally. A Cowork healthcare appliance would follow the same model — all file manipulation, document processing, and data analysis happens on the local device. Only the reasoning queries go to Claude’s API, and those can be structured to exclude identifying information.
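To make "structured to exclude identifying information" concrete, here is a minimal sketch of a local redaction pass that would run on the device before any text is sent out for reasoning. Everything in it is hypothetical: the pattern set, the labels, and the `redact` function are illustrations of the idea, not how Cowork or any certified product works. Real de-identification needs far more than a handful of regular expressions.

```python
import re

# Hypothetical patterns for illustration only. Real PHI detection needs
# much broader coverage (names, addresses, free-text identifiers, and so on).
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}-\d{3}-\d{4}\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d+\b"),
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"),
}

def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed placeholder label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

note = "Patient MRN: 448291, DOB 03/14/1962, callback 555-867-5309."
print(redact(note))  # Patient [MRN], DOB [DATE], callback [PHONE].
```

The design point is that the raw note never leaves the box. Only the placeholder version would be included in a reasoning request, and the mapping back to real values stays local.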

Law firms face identical constraints. Client privilege means case files cannot leave the firm’s network. As one legal tech analysis put it: “Get the security model in writing — where data lives, who can see prompts and outputs, and how audits work. If a vendor will not document it, they are not ready for your clients.” A Cowork legal appliance with local execution and auditable API calls solves this cleanly.

Schools handle student records under FERPA. Government agencies operate under FedRAMP. Financial institutions answer to SOX and SEC regulations. Nearly 84% of organizations now expect to run AI on-premises or at the edge alongside cloud. The market is not theoretical. It is waiting for a product that makes edge AI as easy as plugging in a router.

The Power Grid Implication at Scale

Run the numbers on what happens if even a fraction of AI workloads move to edge appliances.

If 10 million knowledge workers each shift 50 cloud inference calls per day to local Cowork execution, and each avoided call saves approximately 2.9 Wh of data center energy (the Schneider Electric per-query estimate), that eliminates about 1.45 billion Wh (1.45 GWh) per day, or roughly 0.53 TWh per year. That is modest. But knowledge workers are the small end of the scale. Healthcare alone has 22 million workers in the US. Education employs 7.4 million. Legal services employs 1.8 million. Financial services employs 6.6 million.

If 50 million professionals across regulated industries each run 100 tasks per day on local Cowork appliances instead of cloud — a conservative estimate given the iteration-heavy nature of professional work — the avoided cloud compute reaches 5.3 TWh per year. That is equivalent to the annual electricity consumption of approximately 500,000 US households.
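The arithmetic behind that figure is easy to check. Here is a back-of-envelope sketch using the 2.9 Wh per-call estimate from above. The household figure of about 10,500 kWh per year is my own assumption for an average US home, and the function name is mine. The result is a gross number: it does not subtract the energy the local device spends doing the same work.

```python
WH_PER_AVOIDED_CALL = 2.9        # per-query estimate quoted in the text
HOUSEHOLD_KWH_PER_YEAR = 10_500  # assumption: rough average US household usage

def avoided_wh_per_year(workers: int, calls_per_day: int,
                        wh_per_call: float = WH_PER_AVOIDED_CALL,
                        days: int = 365) -> float:
    """Data center energy (Wh) avoided over one year, before local device use."""
    return workers * calls_per_day * wh_per_call * days

annual_wh = avoided_wh_per_year(workers=50_000_000, calls_per_day=100)
annual_twh = annual_wh / 1e12
households = annual_wh / (HOUSEHOLD_KWH_PER_YEAR * 1_000)

print(f"{annual_twh:.1f} TWh per year")
print(f"about {households:,.0f} households")
```

The same function covers the smaller knowledge-worker scenario by changing `workers` and `calls_per_day`, which makes it easy to see how sensitive the total is to the per-call estimate.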

This is not going to solve the AI energy crisis. Global AI electricity demand is heading toward 1,000 TWh by 2030. But it can meaningfully slow the growth rate of data center demand at a moment when the grid literally cannot keep up. And the economic incentives are aligned — organizations save money by running locally, users get faster performance, Anthropic reduces infrastructure costs, and the grid gets breathing room. Every player benefits.

Why Anthropic Should Build This

Anthropic is not a hardware company. But Apple was not a phone company. Amazon was not a cloud company. The most valuable technology companies become the thing they need to become to deliver on their core product vision.

Anthropic’s core product is Claude. Claude’s value increases when it can operate on more data, in more environments, with fewer restrictions. Every compliance barrier that prevents a hospital, law firm, or school from using Claude is lost revenue. Every inference call that could run locally but instead hits their GPU cluster is margin erosion. Every professional who needs a 30-minute Cowork session but cannot install a Linux VM on their locked-down corporate laptop is an unreachable customer.

A Cowork appliance solves all three problems simultaneously. It gives regulated industries a compliant deployment path. It offloads execution costs from Anthropic’s infrastructure. And it puts Claude on desks where corporate IT policy would otherwise block it — because the appliance can be approved, certified, and managed like any other piece of network equipment.

The skills marketplace becomes the recurring revenue engine. Sell the hardware at cost or near cost — the classic razor-and-blades model. Charge for the Claude API usage that powers the reasoning layer. And build an ecosystem where industry specialists create, certify, and sell domain-specific skill packages through an Anthropic marketplace. The healthcare skills package is maintained by healthcare AI specialists. The legal skills package is maintained by legal tech experts. Anthropic takes a platform cut on every skills subscription.

The infrastructure already exists. The Cowork runtime is built. MCP provides the connectivity standard. The Agent SDK provides the developer framework. The skills architecture provides the specialization mechanism. The only thing missing is the box.

What I Am Watching

Qualcomm’s AI On-Prem Appliance is shipping. NVIDIA’s Jetson Thor is in production. The inference chip market is projected to more than double in a single year. Anthropic added Windows support to Cowork in February 2026. Deloitte’s latest research says most AI compute will still run on expensive, power-hungry chips in large data centers, but the trend line is unmistakable.

If Anthropic announces a hardware partnership in the next 12 months, remember this article. The economics demand it. The grid demands it. The customers who cannot use cloud AI are demanding it. And the architectural foundation — Cowork’s edge execution model — is already built and running on millions of machines.

The question is not whether AI moves to the edge. The question is whether Anthropic leads that transition or watches someone else package their architecture into a product first.
