The fundamental challenge in agentic commerce is not just measuring costs. It is building predictive models that decompose the non-linear relationship between customer intent, agent decision-making, and resource consumption across heterogeneous API services.

Unlike traditional e-commerce transactions with fixed processing costs, AI agents exhibit highly variable computational patterns. A single customer interaction might trigger anywhere from 3 to 40+ discrete API calls, each with different latency characteristics, token consumption patterns, and downstream dependencies. This creates a multi-dimensional cost attribution problem that requires sophisticated modeling approaches.

The Multi-Agent Cost Attribution Problem

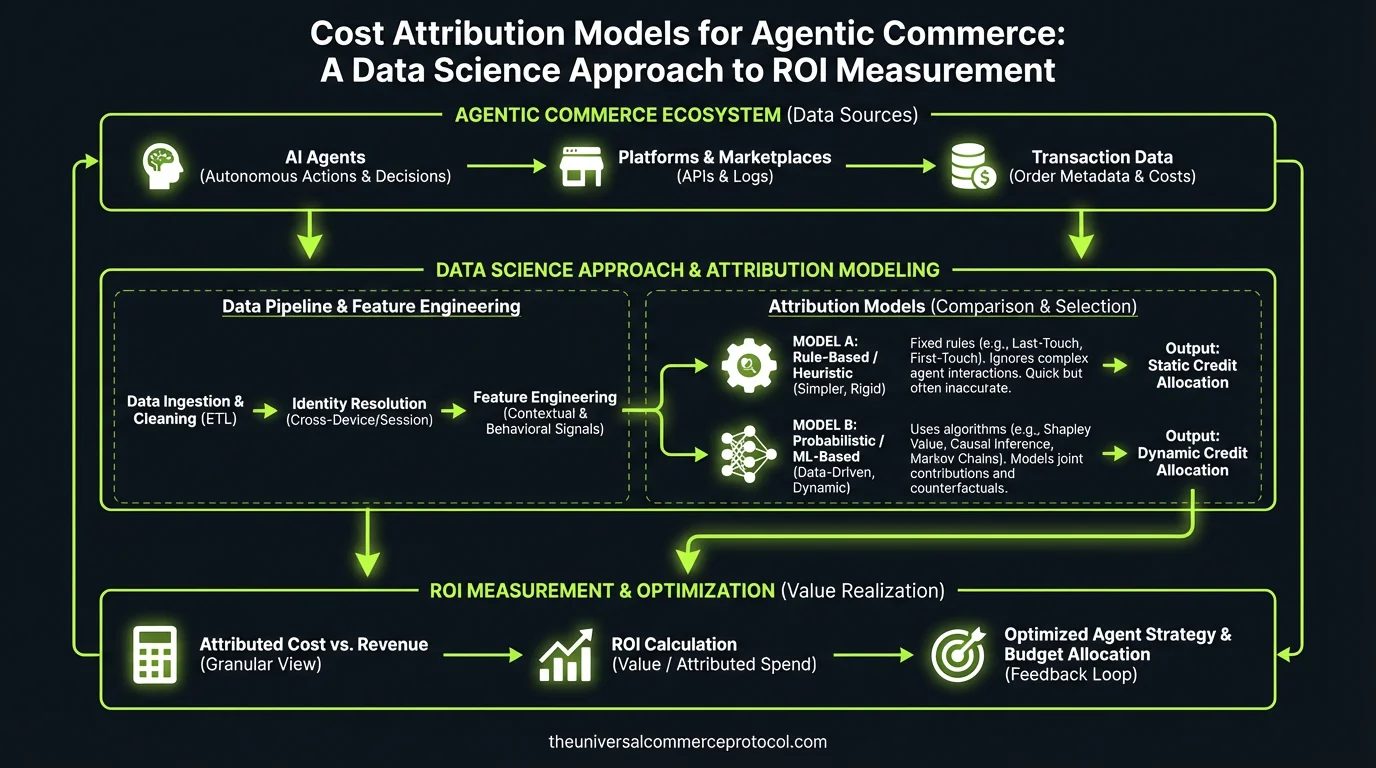

From a data science perspective, agent cost attribution is fundamentally a causal inference problem. We need to understand not just what an agent costs, but why certain interactions are expensive and how we can predict and optimize these costs.

Consider the feature space for a single transaction:

- Customer features: lifetime value (LTV) segment, previous interaction history, device type, geographic latency

- Intent features: Query complexity, semantic similarity to training data, multi-turn conversation depth

- System features: Model temperature, retrieval-augmented generation (RAG) database size, concurrent load

- Temporal features: Time of day, seasonality, cache hit rates

Each of these dimensions affects the agent’s decision path and resource consumption. A high-LTV customer asking about a complex product configuration might trigger expensive semantic search operations, multiple LLM reasoning chains, and inventory system queries, while a returning customer with a simple intent might be resolved from cached responses.

Modeling Agent Decision Costs in Commerce

The key insight is that agentic commerce systems operate as hierarchical decision processes where each node in the decision tree has an associated cost distribution. Unlike traditional ML models with fixed inference costs, agents dynamically construct their computation graphs based on customer input and confidence thresholds.
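As a minimal sketch of this idea, the snippet below treats an interaction as a small tree in which each node has a mean cost and each branch has a probability, then computes the expected total cost recursively. The node names, costs, and branch probabilities are illustrative assumptions, not measurements from any real system.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionNode:
    """One step in an agent's decision process (hypothetical structure)."""
    name: str
    mean_cost: float  # expected cost of executing this step, in USD
    # Each child is a (branch probability, child node) pair.
    children: list = field(default_factory=list)


def expected_cost(node: DecisionNode) -> float:
    """Expected total cost of the subtree rooted at `node`."""
    return node.mean_cost + sum(p * expected_cost(child) for p, child in node.children)


# Illustrative numbers only: 70% of intents resolve from cache,
# 30% need a longer LLM reasoning chain.
cached = DecisionNode("cached_response", 0.001)
deep = DecisionNode("llm_reasoning_chain", 0.040)
root = DecisionNode("classify_intent", 0.002, [(0.7, cached), (0.3, deep)])

print(f"expected cost per interaction: ${expected_cost(root):.4f}")
```

A real cost model would replace the fixed means with learned distributions and condition the branch probabilities on customer and intent features.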

Feature Engineering for Cost Prediction

Effective cost models require features that capture both the complexity of customer intent and the efficiency of agent reasoning:

Intent Complexity Signals:

- Token entropy in customer queries

- Semantic distance from product catalog embeddings

- Syntactic complexity (parsing depth, entity count)

- Multi-modal input presence (images, voice, structured data)

Agent Reasoning Patterns:

- Confidence scores at each decision point

- RAG retrieval precision/recall metrics

- Chain-of-thought reasoning depth

- Tool usage frequency (calculator, inventory lookup, pricing engine)

System State Features:

- Vector database cache hit rates

- LLM API latency percentiles

- Concurrent request load

- Regional inference costs
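One of the intent complexity signals listed above, token entropy, can be sketched in a few lines. This version assumes naive whitespace tokenization; a production feature would more likely use the tokenizer of the LLM being called.

```python
import math
from collections import Counter


def token_entropy(query: str) -> float:
    """Shannon entropy (in bits) of the token distribution in a query.

    Tokens are lowercased whitespace-separated words, which is a simplifying
    assumption made for this sketch.
    """
    tokens = query.lower().split()
    if not tokens:
        return 0.0
    counts = Counter(tokens)
    total = len(tokens)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


simple = token_entropy("shoes shoes shoes")
varied = token_entropy("waterproof trail shoes with wide toe box under 120 dollars")
print(simple, varied)
```

Repetitive queries score near zero, while queries with many distinct tokens score higher, which makes the value usable as one input feature among many.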

Universal Commerce Protocol (UCP) and Action Space Structure

UCP provides a standardized action space that constrains agent behavior, which is crucial for cost modeling. Instead of open-ended generation, agents operate within defined commerce primitives: product search, inventory check, price calculation, cart modification, checkout initiation.

This structured action space allows us to build action-specific cost models. Each UCP action has different computational requirements:

- product_search: High vector DB costs, variable LLM reasoning

- inventory_check: Deterministic API costs, low variance

- price_calculation: Complex business rules, potential external service calls

- checkout_flow: Payment gateway costs, fraud detection inference

By modeling costs at the UCP action level, we can build compositional cost functions that predict total transaction cost based on the agent’s planned action sequence.
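A compositional cost function of this kind could look like the following sketch. The per-action means and variances are made-up placeholders, and the variance sum assumes action costs are independent, which may not hold in practice.

```python
import math

# Hypothetical per-action cost statistics in USD: (mean, variance).
ACTION_COSTS = {
    "product_search": (0.0120, 0.000036),
    "inventory_check": (0.0010, 0.00000001),
    "price_calculation": (0.0040, 0.000004),
    "checkout_flow": (0.0200, 0.000025),
}


def plan_cost(actions):
    """Return (expected cost, standard deviation) for a planned action sequence.

    Means add directly; variances add only under the independence assumption.
    """
    mean = sum(ACTION_COSTS[a][0] for a in actions)
    variance = sum(ACTION_COSTS[a][1] for a in actions)
    return mean, math.sqrt(variance)


plan = [
    "product_search",
    "product_search",
    "inventory_check",
    "price_calculation",
    "checkout_flow",
]
mean, std = plan_cost(plan)
print(f"expected ${mean:.4f}, std ${std:.4f}")
```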

Training Data and Model Architecture

Building reliable cost attribution models requires carefully constructed training datasets that capture the full diversity of agent interactions:

Data Collection Strategy

Request-level instrumentation: Every API call needs dimensional tagging with timestamps, latency, token counts, and business context. This creates a time-series dataset where each transaction is a sequence of (action, cost, context) tuples.

Hierarchical labeling: Costs accrue at several levels (individual API calls, complete customer intents, full transaction flows, and customer lifetime interactions). Label each record with its parent at every level so that features can capture dependencies across levels and over time.

Failure-case data: Capture failed transactions, escalations to human agents, and timeout scenarios. These provide crucial signal about when agent costs become unbounded.
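The request-level instrumentation described above might be represented as follows. The `CallRecord` fields and the `group_by_transaction` helper are hypothetical names chosen for this sketch; the point is only that each API call carries its own tags and can be rolled up into a per-transaction sequence of (action, cost, context) tuples.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CallRecord:
    """One tagged API call (field names are illustrative)."""
    transaction_id: str
    action: str
    timestamp: float   # seconds since epoch
    latency_ms: float
    tokens_in: int
    tokens_out: int
    cost_usd: float
    context: str       # business context tag, e.g. customer segment


def group_by_transaction(records):
    """Group calls into time-ordered (action, cost, context) sequences."""
    grouped = defaultdict(list)
    for r in sorted(records, key=lambda r: r.timestamp):
        grouped[r.transaction_id].append((r.action, r.cost_usd, r.context))
    return dict(grouped)


records = [
    CallRecord("t1", "inventory_check", 2.0, 40.0, 0, 0, 0.001, "returning"),
    CallRecord("t1", "product_search", 1.0, 310.0, 220, 90, 0.012, "returning"),
    CallRecord("t2", "product_search", 1.5, 290.0, 180, 70, 0.010, "new"),
]
sequences = group_by_transaction(records)
print(sequences["t1"])
```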

Model Architecture Considerations

Given the sequential nature of agent interactions, recurrent or transformer-based architectures can outperform traditional regression models for cost prediction when enough interaction data is available. Consider:

- Sequence-to-scalar models: Predict total transaction cost from the sequence of customer inputs and agent actions

- Multi-task learning: Jointly predict cost, latency, and success probability

- Hierarchical models: Separate models for action-level costs and transaction-level aggregation

- Uncertainty quantification: Cost predictions need confidence intervals for resource planning

Evaluation Metrics and Monitoring

Traditional business metrics such as conversion rate and average order value (AOV) don’t capture agent efficiency. Data scientists need metrics that reflect the unique characteristics of agentic systems:

Agent-Specific Performance Metrics

- Cost-per-completed-intent (CCI): Total agent cost divided by successfully resolved customer intents

- Marginal cost efficiency: How cost scales with interaction complexity

- Escalation cost burden: Expected cost when agents fail and require human intervention

- Temporal cost stability: How consistent costs remain across different load conditions
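Cost-per-completed-intent follows directly from its definition above. In this sketch each interaction is assumed to be a `(cost_usd, resolved)` pair; note that the cost of unresolved interactions stays in the numerator, so failures raise the metric.

```python
def cost_per_completed_intent(interactions):
    """Total agent cost divided by the number of successfully resolved intents.

    `interactions` is a list of (cost_usd, resolved) pairs. Returns infinity
    when nothing was resolved, since the cost bought no completed intents.
    """
    total_cost = sum(cost for cost, _ in interactions)
    completed = sum(1 for _, resolved in interactions if resolved)
    if completed == 0:
        return float("inf")
    return total_cost / completed


# Illustrative data: two resolved intents and one failure.
interactions = [(0.02, True), (0.05, False), (0.03, True)]
cci = cost_per_completed_intent(interactions)
print(f"CCI: ${cci:.3f}")
```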

Model Evaluation Framework

Backtesting on held-out time periods: Agent costs exhibit temporal drift as models are updated and customer behavior shifts. Evaluate model performance on future time windows, not random splits.

Stratified evaluation by customer segment: Cost patterns differ dramatically between customer types. A model that works well for high-intent purchasers might fail for browsing behavior.

Sensitivity analysis: How do cost predictions change with different agent configurations, model temperatures, or timeout thresholds?
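The backtesting approach above comes down to splitting by time rather than at random. A minimal sketch, assuming each row is a dict with a `timestamp` key (the row layout and dates are illustrative):

```python
from datetime import datetime


def out_of_time_split(rows, cutoff):
    """Train on rows strictly before `cutoff`, test on rows at or after it."""
    train = [r for r in rows if r["timestamp"] < cutoff]
    test = [r for r in rows if r["timestamp"] >= cutoff]
    return train, test


rows = [
    {"timestamp": datetime(2024, 1, 5), "cost_usd": 0.021},
    {"timestamp": datetime(2024, 2, 10), "cost_usd": 0.034},
    {"timestamp": datetime(2024, 3, 15), "cost_usd": 0.019},
    {"timestamp": datetime(2024, 4, 20), "cost_usd": 0.052},
]
train, test = out_of_time_split(rows, cutoff=datetime(2024, 3, 1))
print(len(train), "training rows,", len(test), "test rows")
```

Because every test row is later than every training row, the evaluation reflects how the model would behave after deployment, when agent updates and shifting customer behavior have already changed the cost distribution.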

Research Directions and Optimization

Several research opportunities emerge from the intersection of agentic AI and cost modeling:

Adaptive Cost-Aware Routing

Can we train agents to be cost-conscious in their reasoning? Instead of always choosing the most accurate approach, agents could balance accuracy against computational cost based on customer LTV and margin thresholds.
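One simple way to frame this trade-off is as an expected-net-value choice: pick the strategy that maximizes success probability times the value of a resolved intent, minus expected cost. The strategies, probabilities, and costs below are hypothetical, and this decision rule is only one of several reasonable formulations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Strategy:
    name: str
    expected_cost: float  # USD per interaction
    success_prob: float   # probability the intent is resolved


def choose_strategy(strategies, value_if_resolved):
    """Pick the strategy with the highest expected net value."""
    return max(
        strategies,
        key=lambda s: s.success_prob * value_if_resolved - s.expected_cost,
    )


# Illustrative options, from cheapest to most expensive.
strategies = [
    Strategy("cached_answer", 0.001, 0.60),
    Strategy("small_model", 0.010, 0.80),
    Strategy("large_model_chain", 0.080, 0.95),
]

# `value_if_resolved` would come from customer LTV and margin estimates.
for value in (0.01, 0.10, 5.00):
    print(value, choose_strategy(strategies, value).name)
```

With these numbers, the cheapest strategy wins when a resolved intent is worth very little, and the most expensive one wins only when the value at stake is high.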

Hierarchical Cost Allocation

How do we fairly distribute infrastructure costs (model serving, vector databases, monitoring) across different customer interactions? This is particularly complex in multi-tenant systems where agents serve different merchant verticals.
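As a baseline for this question, shared costs can be split in proportion to a usage measure such as request count or tokens processed. The sketch below shows that usage-proportional rule with made-up tenants and numbers. It is the simplest option, not necessarily the fairest one, and other schemes may suit multi-tenant systems better.

```python
def allocate_shared_cost(shared_cost, usage_by_tenant):
    """Split a shared infrastructure cost in proportion to each tenant's usage."""
    total_usage = sum(usage_by_tenant.values())
    if total_usage == 0:
        raise ValueError("cannot allocate cost when total usage is zero")
    return {
        tenant: shared_cost * usage / total_usage
        for tenant, usage in usage_by_tenant.items()
    }


# Hypothetical monthly vector database bill split by request volume.
allocation = allocate_shared_cost(
    1000.0,
    {"fashion": 600, "electronics": 300, "grocery": 100},
)
print(allocation)
```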

Causal Cost Attribution

Which specific customer behaviors or system configurations cause high-cost interactions? Moving beyond correlation to causal understanding enables targeted optimization interventions.

Experimental Framework for Data Scientists

To validate cost attribution models in production, data scientists should design controlled experiments that isolate different cost components:

A/B test agent configurations: Compare cost distributions across different model architectures, prompt strategies, or confidence thresholds. Measure both immediate costs and downstream effects on customer satisfaction.

Cohort analysis by cost tier: Segment customers by predicted interaction cost and analyze long-term retention and LTV patterns. Do high-cost interactions actually correlate with better customer outcomes?

Counterfactual cost estimation: For each agent interaction, estimate what the cost would have been with human support. This provides a baseline for ROI calculations, though it remains an estimate rather than observed ground truth.

Feature ablation studies: Which input features most strongly predict high-cost interactions? Can you build interpretable models that help business stakeholders understand cost drivers?

Temporal stability analysis: How do cost models degrade over time as customer behavior shifts and agent models are updated? Build monitoring pipelines that detect when model retraining is needed.

FAQ

How do you handle the cold start problem when building cost models for new agent configurations?

Use transfer learning from similar agent architectures and bootstrap with synthetic data based on API pricing models. Start with simple parametric models (linear regression on token counts) and gradually incorporate more complex features as interaction data accumulates. Consider Bayesian approaches that can quantify uncertainty in cost predictions for new configurations.
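The "simple parametric model" starting point can be as small as a one-variable least-squares fit of cost against token count. The sketch below uses the closed-form solution and synthetic data generated from an assumed pricing rule (a fixed overhead plus a per-token price); both the rule and the numbers are placeholders.

```python
def fit_linear(xs, ys):
    """Ordinary least squares for y = intercept + slope * x (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Synthetic bootstrap data from an assumed pricing model:
# $0.001 fixed overhead plus $0.000002 per token.
tokens = [500, 1000, 2000, 4000]
costs = [0.001 + 0.000002 * t for t in tokens]

intercept, slope = fit_linear(tokens, costs)
predicted = intercept + slope * 3000
print(f"predicted cost for 3000 tokens: ${predicted:.4f}")
```

As real interaction data accumulates, the synthetic rows can be replaced with observed ones and further features added.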

What’s the best way to model the heavy-tailed distribution of agent costs when most interactions are cheap but some are extremely expensive?

Use robust objectives, such as Huber loss or the quantile (pinball) loss used in quantile regression, that don’t over-penalize outliers. Consider mixture models that explicitly separate “normal” vs “escalated” interaction modes. For budgeting purposes, focus on modeling the 95th and 99th percentile costs rather than just mean costs.
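For reference, the two losses mentioned here can be written out for a single prediction as below. These are the standard definitions; the example values are arbitrary.

```python
def pinball_loss(y_true, y_pred, q):
    """Quantile (pinball) loss for one observation at quantile level q in (0, 1).

    Under-prediction is weighted by q and over-prediction by (1 - q), so
    minimizing it at q = 0.95 targets the 95th percentile.
    """
    diff = y_true - y_pred
    return max(q * diff, (q - 1) * diff)


def huber_loss(y_true, y_pred, delta=1.0):
    """Huber loss: quadratic for small errors, linear beyond `delta`."""
    err = abs(y_true - y_pred)
    if err <= delta:
        return 0.5 * err ** 2
    return delta * (err - 0.5 * delta)


# At q = 0.95, under-predicting a cost by 2 is penalized far more
# than over-predicting it by 2.
print(pinball_loss(10.0, 8.0, 0.95), pinball_loss(8.0, 10.0, 0.95))
print(huber_loss(0.0, 0.5), huber_loss(0.0, 3.0))
```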

How can you build causal models to understand which agent design choices actually drive costs vs which are just correlated?

Design controlled experiments where you randomly assign customers to different agent configurations and measure cost differences. When customers do not always receive the configuration they were assigned, the random assignment itself (for example, an A/B test of prompt strategies) can serve as an instrumental variable to identify causal effects. Difference-in-differences analysis can help when you can’t randomize at the customer level.
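The difference-in-differences estimate mentioned above is the change in the treated group minus the change in the control group. A minimal sketch with invented per-interaction costs, assuming the usual parallel-trends condition holds:

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Estimate the treatment effect as (treated change) - (control change)."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical average costs (USD) before and after a prompt change that was
# rolled out to one merchant vertical but not another.
effect = diff_in_diff(
    treated_pre=[0.05, 0.07],
    treated_post=[0.03, 0.05],
    control_pre=[0.05, 0.07],
    control_post=[0.055, 0.075],
)
print(f"estimated effect on cost: {effect:+.3f} USD per interaction")
```

The control group's change absorbs drift that affects both groups, such as a provider price change, so the remainder is attributed to the intervention.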

What evaluation metrics best capture whether cost attribution models will generalize to future agent architectures?

Focus on out-of-time validation rather than random splits, since agent architectures and customer behavior both drift over time. Measure prediction intervals, not just point estimates. Test model performance on edge cases and adversarial inputs that might become more common with different agent designs.

How do you balance model interpretability with prediction accuracy when stakeholders need to understand cost drivers?

Use hierarchical models where simple, interpretable models handle the majority of interactions and complex models only activate for edge cases. SHAP values work well for explaining individual cost predictions. Consider building separate “diagnostic” models optimized for interpretability alongside “production” models optimized for accuracy.

This article is a perspective piece adapted for Data Scientist audiences.

What makes cost attribution in agentic commerce different from traditional e-commerce?

Unlike traditional e-commerce with fixed processing costs, AI agents exhibit highly variable computational patterns. A single customer interaction can trigger anywhere from 3 to 40+ discrete API calls, each with different latency characteristics, token consumption patterns, and downstream dependencies. This creates a multi-dimensional cost attribution problem that requires sophisticated modeling approaches rather than simple fixed-cost calculations.

Why is cost attribution considered a causal inference problem?

Cost attribution in agentic commerce is fundamentally a causal inference problem because we need to understand not just what an agent costs, but why certain interactions are expensive and how we can predict and optimize these costs. This requires moving beyond simple observation to understanding the causal relationships between customer intent, agent decision-making, and resource consumption.

What are the key feature categories that influence agentic commerce costs?

There are four main feature categories: Customer features (LTV segment, interaction history, device type, geographic latency), Intent features (query complexity, semantic similarity to training data, conversation depth), System features (model temperature, RAG database size, concurrent load), and Temporal features (time of day, seasonality, cache hit rates). Understanding these features helps predict and decompose the complex relationships between customer interactions and resource consumption.

How can predictive models help optimize ROI in agentic commerce?

Predictive models that decompose the complex, non-linear relationships between customer intent, agent decision-making, and resource consumption can identify which interactions are most expensive and why. This enables data-driven optimization strategies to reduce computational overhead while maintaining service quality, ultimately improving ROI measurement and cost efficiency.

What challenges arise from variable API call patterns in AI agents?

Each API call within an agent’s workflow has different latency characteristics, token consumption patterns, and downstream dependencies. The variability in the number of API calls triggered by a single interaction (3 to 40+ calls) makes it difficult to apply simple cost allocation methods, requiring instead sophisticated multi-dimensional modeling approaches to accurately attribute costs.
