Your commerce AI agent processes 50,000 customer interactions daily across product discovery, pricing negotiations, and order fulfillment. Unlike supervised learning models where you evaluate against held-out test sets, these agents operate in dynamic environments where the same input can yield different—yet potentially valid—outputs.

The core challenge isn’t just monitoring system performance; it’s understanding model behavior in a non-stationary problem space where optimal actions depend on real-time inventory, customer context, and multi-agent coordination dynamics.

The Multi-Agent Inference Problem

Commerce agents operate in what we might call a “contextual multi-armed bandit with delayed feedback and non-stationary rewards.” A customer query like “find me running shoes under $120” triggers a cascade of model decisions:

The intent classification model parses the query structure and extracts constraints. The retrieval model searches product embeddings against the query embedding. The ranking model scores products based on predicted customer preferences. The pricing agent evaluates dynamic pricing rules and inventory velocity. The negotiation agent determines discount thresholds based on customer lifetime value predictions.

Each decision point introduces potential error propagation. A misclassified intent (false positive rate of 3% on constraint detection) compounds downstream into irrelevant product retrievals, which the ranking model must then attempt to salvage through relevance scoring.
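
To make the compounding concrete, here is a minimal sketch that multiplies per-stage success rates. It assumes stage errors are independent, which is a simplification, since real errors are often correlated. Only the 3% intent error comes from the text above; every other rate is a hypothetical placeholder.

```python
from math import prod

# Probability that each stage hands a usable output to the next stage.
# Only the intent figure (1 - 0.03) comes from the article; the other
# values are hypothetical placeholders for illustration.
stage_success = {
    "intent": 0.97,
    "retrieval": 0.95,
    "ranking": 0.95,
    "pricing": 0.98,
    "negotiation": 0.98,
}

def end_to_end_success(rates):
    """Probability that every stage succeeds, assuming independent errors."""
    return prod(rates.values())

p_ok = end_to_end_success(stage_success)
print(f"end-to-end success: {p_ok:.3f}")
```

With these placeholder rates, roughly 84% of interactions pass all five stages cleanly, even though no single stage is worse than 95%.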

Per-model accuracy metrics tell you little on their own here. What matters is the end-to-end utility: did the customer purchase something, and was that purchase decision economically optimal for both parties?

How Unified Commerce Protocols Structure the Solution Space

The observability challenge becomes tractable when we think of commerce protocols as defining the action space for our agents. Rather than unlimited text generation, agents operate within structured API constraints that limit the decision space.

Consider a pricing negotiation agent. Without protocol constraints, it might generate arbitrary responses (“I’ll give you a 90% discount”). With a unified commerce protocol (UCP), it operates within defined parameters: discount_threshold_max, inventory_velocity_factor, customer_segment_multiplier. This transforms an open-ended language modeling problem into a constrained optimization problem.
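
One way to picture the constrained action space is as a projection step between the model's proposal and the API call. The sketch below reuses the three parameter names from the text, but their semantics and the formula that combines them are assumptions made purely for illustration, not a definition taken from any protocol specification.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PricingConstraints:
    # Names come from the article; the meanings below are assumed.
    discount_threshold_max: float       # hard cap, e.g. 0.20 means 20%
    inventory_velocity_factor: float    # below 1 shrinks discounts on fast-moving stock
    customer_segment_multiplier: float  # above 1 allows more for high-value segments

def constrain_discount(proposed: float, c: PricingConstraints) -> float:
    """Project a model-proposed discount into the allowed range."""
    ceiling = (c.discount_threshold_max
               * c.inventory_velocity_factor
               * c.customer_segment_multiplier)
    ceiling = min(ceiling, c.discount_threshold_max)  # never exceed the hard cap
    return max(0.0, min(proposed, ceiling))

constraints = PricingConstraints(
    discount_threshold_max=0.20,
    inventory_velocity_factor=0.8,
    customer_segment_multiplier=1.1,
)
# The "90% discount" proposal from the text is clamped to the ceiling.
clamped = constrain_discount(0.90, constraints)
print(f"executed discount: {clamped:.3f}")
```

Logging both the proposed and the clamped value gives you a direct measure of how often the model tries to leave the allowed action space.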

Feature Engineering Opportunities

UCP exposes rich feature engineering opportunities that traditional e-commerce logs miss:

Agent state features: confidence scores, tool invocation sequences, fallback trigger rates, multi-step reasoning chains.

Context features: inventory freshness timestamps, pricing volatility measures, cross-agent communication patterns.

Environmental features: API latency distributions, concurrent user loads, payment gateway success rates by region.

These features enable you to model agent performance as a function of environmental conditions—critical for understanding when and why agents fail.

Model and Data Considerations

The training data problem for commerce agents differs fundamentally from standard NLP tasks. Your training corpus includes:

Historical customer interaction logs (but with privacy constraints and selection bias toward completed transactions). Synthetic conversation data (but with potential distribution shift from real customer language patterns). Human expert demonstrations of complex scenarios (but limited volume and potentially inconsistent labeling).

The data sparsity problem is acute for edge cases: refund negotiations, bulk order approvals, cross-border shipping complications. These scenarios represent <1% of your training data but generate 20% of customer complaints.

Model Architecture Implications

Most commerce agents implement some variant of ReAct (Reasoning + Acting) patterns, where language models generate intermediate reasoning steps before API calls. This creates unique observability requirements:

You need to log not just the final API call, but the reasoning chain that led to it. Token-level confidence scores become critical—a single low-confidence token in a price calculation can propagate into a $10,000 ordering error. Multi-model coordination (LLM + specialized pricing models + inventory systems) requires careful attention to feature drift between components.
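
A minimal sketch of such a trace record follows. The class and field names are hypothetical, and it assumes your LLM API exposes per-token log-probabilities, which not every provider does.

```python
import math
from dataclasses import dataclass, field
from typing import List

@dataclass
class ReasoningStep:
    thought: str                  # intermediate reasoning text
    action: str                   # tool or API the step led to ("" if none)
    token_logprobs: List[float]   # per-token log-probabilities, if available

    def min_token_prob(self) -> float:
        """Probability of the least confident token in this step."""
        if not self.token_logprobs:
            return 1.0
        return math.exp(min(self.token_logprobs))

@dataclass
class AgentTrace:
    trace_id: str
    steps: List[ReasoningStep] = field(default_factory=list)

    def low_confidence_steps(self, threshold: float = 0.5) -> List[int]:
        """Indices of steps with at least one token below the threshold."""
        return [i for i, s in enumerate(self.steps)
                if s.min_token_prob() < threshold]

trace = AgentTrace("t-001")
trace.steps.append(ReasoningStep(
    "Customer wants running shoes under $120", "search_products", [-0.01, -0.02]))
trace.steps.append(ReasoningStep(
    "Total for 40 units at 12.00 each is 480.00", "create_order", [-0.05, -1.6]))
flagged = trace.low_confidence_steps(threshold=0.5)
print(flagged)
```

Here the second step is flagged because one of its tokens has a probability of about 0.2. The 0.5 threshold is arbitrary and would need calibration against real failures.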

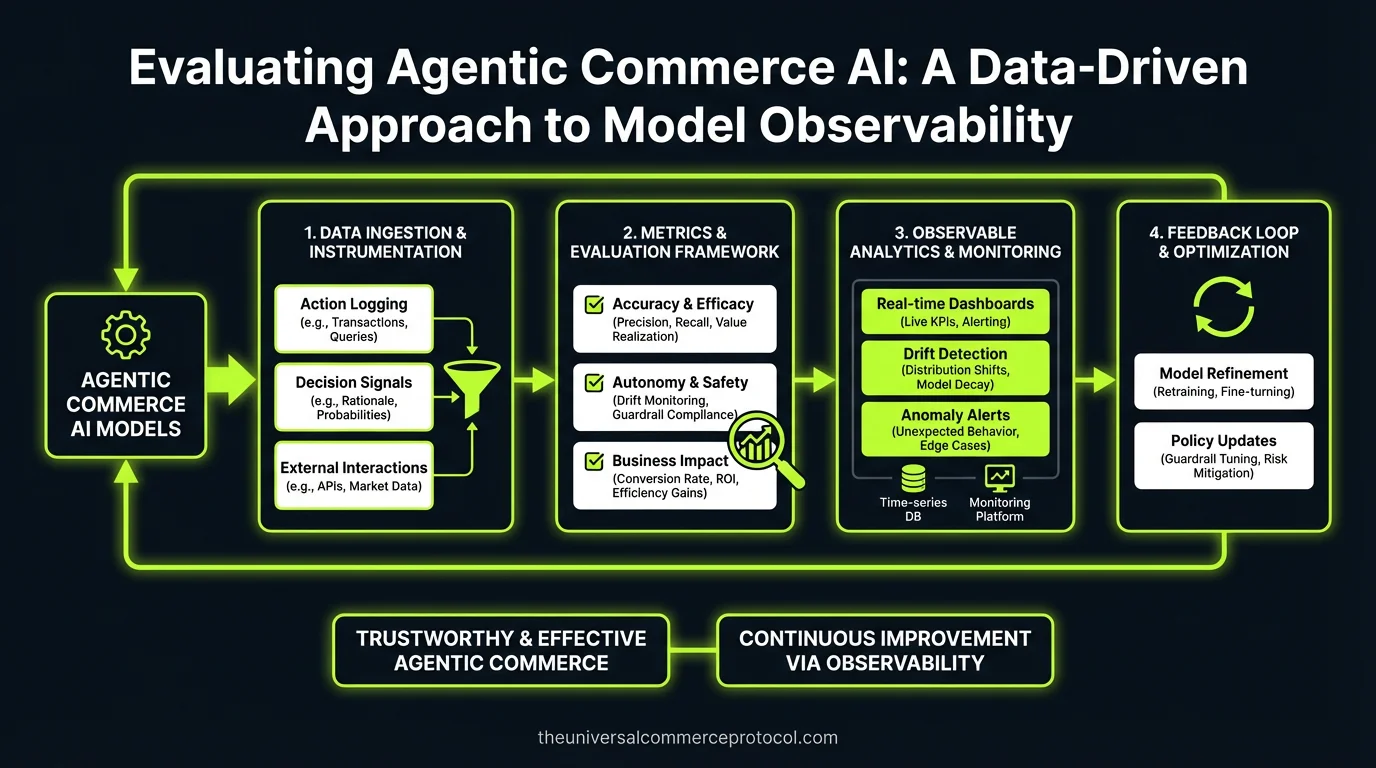

Evaluation and Monitoring Frameworks

Standard ML evaluation metrics (accuracy, F1, AUC) don’t capture what matters in commerce: business outcomes, customer satisfaction, and economic efficiency. You need multi-dimensional evaluation frameworks.

Online Evaluation Metrics

Task completion rate: Percentage of customer intents successfully resolved without human escalation.

Economic efficiency: Revenue per interaction, cost per successful transaction, margin preservation during negotiations.

Error propagation analysis: How often does an error in one agent (pricing) cascade to failures in downstream agents (payment processing)?

Latency distribution analysis: P95 response times broken down by reasoning step—is the delay in product retrieval or in LLM inference?
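
The per-step latency breakdown can be sketched with the standard library alone. The span format, a list of (step name, latency in milliseconds) pairs, is an assumption about how your traces are exported, and the percentile uses the simple nearest-rank definition.

```python
import math
from collections import defaultdict

def percentile(values, p):
    """Nearest-rank percentile of a non-empty list, p in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]

def p95_by_step(spans):
    """spans: iterable of (step_name, latency_ms) pairs from agent traces."""
    by_step = defaultdict(list)
    for step, latency_ms in spans:
        by_step[step].append(latency_ms)
    return {step: percentile(v, 95) for step, v in by_step.items()}

# Hypothetical spans: 20 retrieval calls and 4 LLM calls.
spans = [("retrieval", 10 * i) for i in range(1, 21)]
spans += [("llm_inference", ms) for ms in (400, 420, 450, 1200)]
p95 = p95_by_step(spans)
print(p95)
```

Comparing P95 per step rather than per request shows whether a slow tail comes from retrieval or from LLM inference.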

Offline Evaluation Approaches

Counterfactual evaluation becomes critical. When your pricing agent offers a 15% discount, you need to estimate: would a 10% discount have achieved the same conversion? What’s the confidence interval on that estimate given your historical data?
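
A minimal sketch of one standard approach, inverse propensity scoring (IPS), is shown below. It assumes discounts were logged with a known probability of being chosen (for example, from a randomized logging policy). Without those propensities the estimate is not valid. The log format and action labels are hypothetical.

```python
import math

def ips_estimate(logs, target_action):
    """IPS estimate of the mean reward had `target_action` always been taken.

    logs: list of (action, propensity, reward), where propensity is the
    logged probability that the logging policy chose `action`.
    Returns (estimate, half_width) of a normal-approximation 95% interval.
    Needs at least two log entries.
    """
    n = len(logs)
    terms = [reward / propensity if action == target_action else 0.0
             for action, propensity, reward in logs]
    mean = sum(terms) / n
    var = sum((t - mean) ** 2 for t in terms) / (n - 1)
    return mean, 1.96 * math.sqrt(var / n)

# Tiny hypothetical log: reward 1 means the customer converted.
logs = [
    ("10%", 0.5, 1),
    ("15%", 0.5, 1),
    ("10%", 0.5, 0),
    ("15%", 0.5, 1),
]
estimate, half_width = ips_estimate(logs, "10%")
print(f"estimated conversion at 10%: {estimate:.2f} +/- {half_width:.2f}")
```

With only four rows the interval is far too wide to be useful. The point is the mechanics: rows that took the target action are up-weighted by the inverse of how likely that action was.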

Implement shadow mode evaluation: run multiple agent versions simultaneously, log all decisions, but only execute one. This generates side-by-side comparison data for complex multi-step workflows before you commit a variant to a live A/B test.
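
A shadow-mode harness can be sketched in a few lines. It assumes each agent is a callable that returns a decision without side effects, and that executing the decision happens in a separate step. The function and field names are hypothetical.

```python
def run_shadow(request, live_agent, shadow_agents, log):
    """Return the live agent's decision; record what each shadow would do."""
    live_decision = live_agent(request)
    record = {"request": request, "live": live_decision, "shadow": {}}
    for name, agent in shadow_agents.items():
        try:
            # Decision only: shadow outputs are logged, never executed.
            record["shadow"][name] = agent(request)
        except Exception as exc:
            # A failing shadow must not affect live traffic.
            record["shadow"][name] = {"error": repr(exc)}
    log.append(record)
    return live_decision

decision_log = []
live = lambda req: {"discount": 0.10}
shadows = {
    "v2": lambda req: {"discount": 0.15},
    "broken": lambda req: 1 / 0,
}
executed = run_shadow({"query": "running shoes under $120"},
                      live, shadows, decision_log)
print(executed, decision_log[0]["shadow"]["v2"])
```

In production you would likely run the shadows asynchronously so they add no latency to the live path. The synchronous loop here is only for clarity.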

Research Directions and Open Problems

Several research opportunities emerge from commerce agent observability:

Causal inference for multi-agent systems: How do you attribute a conversion or failure to specific agent decisions when multiple agents interact?

Drift detection in reasoning patterns: Traditional ML drift detection focuses on input features. Agent reasoning patterns can drift in ways that standard statistical tests miss.

Optimal stopping for agent negotiations: When should a negotiation agent stop offering concessions? This is an optimal stopping problem with an explore-exploit flavor, since each concession also reveals information about the customer’s willingness to pay, and with customer satisfaction as a constraint.

Experimental Framework for Data Scientists

Start with these experiments to build robust commerce agent evaluation:

Trace-level error attribution: Build a classifier that predicts transaction failure probability from early agent decisions. Use SHAP values to identify which reasoning steps contribute most to the predicted failure probability, and treat them as attributions of the classifier’s predictions rather than proof of causation.

Counterfactual pricing analysis: Implement doubly robust estimation to measure the causal impact of agent pricing decisions on customer conversion. Control for selection bias in your transaction logs.
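
The doubly robust estimator combines an outcome model with a propensity correction, and stays consistent if either one is correct. The sketch below assumes logged propensities are available. The log layout is hypothetical, and `q_hat` is a constant stand-in where you would plug in a fitted model.

```python
def doubly_robust(logs, target_action, q_hat):
    """Doubly robust estimate of mean reward under `target_action`.

    logs: list of (context, action, propensity, reward).
    q_hat(context, action): outcome model predicting expected reward.
    """
    total = 0.0
    for context, action, propensity, reward in logs:
        baseline = q_hat(context, target_action)
        # Correct the model's prediction only where the target action was logged.
        if action == target_action:
            correction = (reward - baseline) / propensity
        else:
            correction = 0.0
        total += baseline + correction
    return total / len(logs)

# Stand-in outcome model: predicts 40% conversion everywhere.
q_hat = lambda context, action: 0.4

logs = [
    ({"segment": "new"}, "10%", 0.5, 1),
    ({"segment": "new"}, "15%", 0.5, 1),
    ({"segment": "loyal"}, "10%", 0.5, 0),
    ({"segment": "loyal"}, "15%", 0.5, 1),
]
dr_value = doubly_robust(logs, "10%", q_hat)
print(f"DR estimate of conversion at 10%: {dr_value:.2f}")
```

A better outcome model shrinks the correction terms and therefore the variance, which is the main practical reason to prefer this over plain inverse propensity weighting.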

Multi-agent coordination efficiency: Measure information flow between agents. Are agents making redundant API calls? Can you identify coordination failures that increase latency without improving outcomes?
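
Redundant calls are the easiest coordination failure to measure. This sketch assumes each trace is a list of (agent, endpoint, params) tuples with hashable params. That layout and the endpoint names are hypothetical.

```python
from collections import Counter

def redundant_calls(trace):
    """Map each repeated (endpoint, params) call to its number of extra calls.

    trace: list of (agent, endpoint, params) tuples in call order, where
    params is hashable, e.g. a tuple of sorted key/value pairs.
    """
    counts = Counter((endpoint, params) for _, endpoint, params in trace)
    return {call: n - 1 for call, n in counts.items() if n > 1}

trace = [
    ("ranking", "get_inventory", (("sku", "A1"),)),
    ("pricing", "get_inventory", (("sku", "A1"),)),
    ("pricing", "get_price_rules", (("sku", "A1"),)),
    ("negotiation", "get_inventory", (("sku", "A1"),)),
]
dupes = redundant_calls(trace)
print(dupes)
```

Three agents fetched the same inventory record here, so two of those calls could have been served from a shared cache or passed along in the agent handoff.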

Reasoning chain validation: Sample agent reasoning chains and have human experts label them for logical consistency. Train a separate model to predict reasoning quality—this becomes your “agent reliability score.”

FAQ

How do you handle the cold start problem when evaluating new agent versions?

Use Thompson sampling with contextual bandits. Start with a small traffic allocation (5%) and increase based on confidence bounds around your success metrics. Pre-train on synthetic scenarios that cover edge cases your historical data lacks.
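
As a simplified illustration, the sketch below uses plain Beta-Bernoulli Thompson sampling to route traffic between two agent versions. It is not contextual and does not enforce the 5% starting allocation. Both would be extensions. The success rates in the simulation are hypothetical.

```python
import random

class BetaArm:
    """Beta posterior over one version's success rate."""
    def __init__(self):
        self.successes = 1  # Beta(1, 1) uniform prior
        self.failures = 1

    def sample(self, rng):
        return rng.betavariate(self.successes, self.failures)

    def update(self, success):
        if success:
            self.successes += 1
        else:
            self.failures += 1

def choose_version(arms, rng):
    """Draw from each posterior and route to the version with the largest draw."""
    return max(arms, key=lambda name: arms[name].sample(rng))

# Simulation with hypothetical true task completion rates.
rng = random.Random(0)
true_rate = {"current": 0.60, "candidate": 0.70}
arms = {name: BetaArm() for name in true_rate}
picks = {name: 0 for name in true_rate}
for _ in range(5000):
    version = choose_version(arms, rng)
    picks[version] += 1
    arms[version].update(rng.random() < true_rate[version])
print(picks)
```

Traffic shifts toward the better version as evidence accumulates, so a weak candidate receives little exposure without a manually tuned ramp schedule.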

What’s the best way to detect concept drift in multi-agent commerce systems?

Monitor joint distributions of agent decision sequences, not just individual model outputs. A shift in customer language patterns might not trigger standard feature drift alerts but will show up in intent classification → product retrieval → pricing decision patterns.
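
One way to monitor the joint distribution is to treat each interaction's decision sequence as a single categorical value and compare windows with the Jensen-Shannon divergence. This is a sketch of that idea. The sequence labels are hypothetical, and the alerting threshold would need to be tuned on your own data.

```python
import math
from collections import Counter

def js_divergence(ref_sequences, cur_sequences):
    """Jensen-Shannon divergence (base 2, in [0, 1]) between the empirical
    distributions of decision sequences in two non-empty windows."""
    p, q = Counter(ref_sequences), Counter(cur_sequences)
    n_p, n_q = sum(p.values()), sum(q.values())
    total = 0.0
    for key in set(p) | set(q):
        pi, qi = p[key] / n_p, q[key] / n_q
        mi = 0.5 * (pi + qi)
        if pi:
            total += 0.5 * pi * math.log2(pi / mi)
        if qi:
            total += 0.5 * qi * math.log2(qi / mi)
    return total

# Each tuple is (intent, retrieval category, pricing decision).
seq_a = ("price_filter", "shoes", "discount")
seq_b = ("browse", "shoes", "no_discount")
reference = [seq_a] * 8 + [seq_b] * 2
current = [seq_a] * 2 + [seq_b] * 8
drift = js_divergence(reference, current)
print(f"sequence drift: {drift:.3f}")
```

Because the sequence is compared as a whole, a change in how intents map to pricing decisions registers even when each marginal distribution looks stable.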

How do you evaluate agent performance when ground truth is subjective?

Implement multi-rater evaluation with domain experts, then model inter-rater agreement. Use techniques like MACE (Multi-Annotator Competence Estimation) to weight expert judgments. For customer satisfaction, use delayed feedback loops—survey customers 48 hours post-transaction.
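
Before reaching for MACE, it is worth checking raw agreement. The sketch below computes Cohen's kappa for two raters. It is a much simpler statistic than MACE and is shown only as a first diagnostic. The labels are hypothetical.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two raters on the same items."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if both raters labeled independently at their own rates.
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)

rater_1 = ["good", "good", "bad", "bad"]
rater_2 = ["good", "bad", "bad", "bad"]
kappa = cohens_kappa(rater_1, rater_2)
print(f"kappa: {kappa:.2f}")
```

The raters agree on three of four items, but half of that agreement is expected by chance, so kappa is 0.5 rather than 0.75.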

What sample size do you need for statistically significant A/B tests of agent modifications?

This depends heavily on your baseline conversion rates and minimum detectable effect size. For commerce agents, expect to need on the order of 10,000+ interactions per variant to detect improvements of 2-3 percentage points in task completion rates; detecting relative improvements of 2-3% requires more. Use sequential testing to reduce the expected sample size.
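
For a rough planning number, the standard two-proportion sample size formula can be computed with the standard library. The 70% baseline below is a hypothetical value, and the calculation assumes a fixed-horizon, two-sided test rather than a sequential design.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(p_base, mde_abs, alpha=0.05, power=0.8):
    """Interactions per variant for a two-sided two-proportion z-test.

    p_base: baseline success rate; mde_abs: absolute lift to detect.
    """
    p_new = p_base + mde_abs
    p_bar = (p_base + p_new) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_base * (1 - p_base) + p_new * (1 - p_new))
    ) ** 2
    return math.ceil(numerator / mde_abs ** 2)

# Hypothetical: 70% baseline task completion, detect a 2-point absolute lift.
n_needed = sample_size_per_variant(0.70, 0.02)
print(n_needed)
```

With these inputs the result is roughly 8,000 interactions per variant. Halving the detectable lift roughly quadruples the requirement, which is why the minimum detectable effect matters more than any other input.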

How do you balance model interpretability with performance in commerce agents?

Implement a two-tier architecture: use high-performance models (fine-tuned LLMs) for decision-making, but train separate interpretable models (gradient boosted trees) on the same features to provide explanations. The explanation model doesn’t need to perfectly match the decision model—it needs to capture the main drivers of agent behavior.

This article is a perspective piece adapted for Data Scientist audiences. Read the original coverage here.

What makes evaluating commerce AI agents different from traditional machine learning models?

Commerce AI agents operate in dynamic, non-stationary environments where the same input can produce different yet valid outputs, unlike supervised learning models evaluated against fixed test sets. This requires understanding agent behavior across real-time inventory, customer context, and multi-agent coordination rather than simple performance metrics.

How does error propagation affect commerce AI performance?

Commerce agents involve cascading decision points across multiple models—intent classification, retrieval, ranking, pricing, and negotiation. A small error at one stage (such as a 3% false positive rate in constraint detection) compounds downstream, leading to irrelevant product retrievals and misaligned recommendations that impact overall agent effectiveness.

What is a “contextual multi-armed bandit with delayed feedback” in commerce AI?

This describes the core inference problem in commerce agents: they must make optimal decisions based on real-time context (inventory, customer preferences, pricing rules) while receiving delayed feedback on outcomes and operating in non-stationary reward environments where optimal actions continuously shift.

What decision points are involved when a customer searches for products?

A single query triggers multiple model decisions: intent classification (parsing constraints), retrieval (searching product embeddings), ranking (scoring based on preferences), pricing evaluation (dynamic pricing and inventory velocity), and negotiation (determining discount thresholds based on customer lifetime value).

Why is model observability critical for commerce AI systems processing large-scale interactions?

At scale (50,000+ daily interactions), small errors and behavioral issues become amplified. Observability allows you to track agent behavior across the non-stationary problem space, identify error propagation sources, and understand how real-time factors like inventory and customer context affect decision quality.
